Microsoft plays 1. ... c6

PowerBI has made the first defensive move to protect, or even grow, marketshare as LLMs disrupt BI

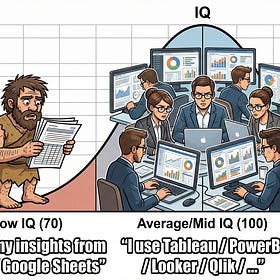

I have been writing a fair bit here on how LLMs are going to massively disrupt business intelligence. My strong hypothesis is that LLMs will allow for dashboards to get hyper-personalised, which means that currently popular tools such as Tableau and PowerBI might lose marketshare to code-first open-source BI tools.

Here is some of my old writing on the topic:

Dashboards will get leapfrogged

Back in 2022, when I was leading what was internally known as the “BI Team” at Delhivery, I decided we needed to live up to our name and build some dashboards. I wrote “instilling a culture of management dashboards” in our annual operating plan, and made grand plans to hire a team to do this.

Anyway, last night I came across this blogpost here that talks about some fairly big changes that Microsoft is already making in PowerBI - it is going code first!

It was a rather eye-opening post for me - not only because it talks about the recent changes in PowerBI, but also how PowerBI actually worked (I’ve never really worked on either Tableau or PowerBI, despite having held a “SVP of BI” title not so long ago).

As many of you might already know (but I didn’t!!), traditionally, PowerBI has been a “visual dashboarding tool”, which means that “developers” don’t really write code but actually drag and drop widgets on a screen. There is a complicated and proprietary PBIX format for storing the dashboards, and these are binaries that cannot be read by anything / anyone but PowerBI itself.

This has meant that dashboarding on PowerBI has not been able to follow standard software engineering practices (the horror! the horror!!) such as code reviews, version control, QA, etc. Like Bergevin writes:

Collaboration is really difficult. What usually happens in the enterprise is that one person builds a project end to end - they alone know how they built it, what the intent is, what work arounds they had to do, etc. It’s a single point of failure. No shared codebase. No team-based code management. If we are lucky there’s a Confluence page or data catalog article that summarizes some of the context, but often that doesn’t exist either.

Like I said, “the horror, the horror”!!

Anyway now Microsoft seems to be finally changing, thanks to LLMs. Proprietary formats and visual drag-drop dashboards work well when you give PowerBI away for free / cheap for customers who are already on the Microsoft suite (think mostly large enterprises). They work less well when your customer teams realise that they are incompatible with LLMs and vibe coding.

LLMs, by definition, are large language models, which means that their learning is contingent on data being available in the form of language, or text. Code is a form of text (and it is, in a sense, verifiable) which explains why LLMs have gotten so good at it.

And the only way that LLMs can help you make dashboards is if they have a corpus of training data for building such dashboards. Thanks to PowerBI’s proprietary file formats, so far no such data exists. Which means that if you’re vibecoding a dashboard from scratch, LLMs won’t be able to actually build it for you - not only does the training data not exist, but the tools being GUI-based makes it harder for a language model to operate.

I asked this question to Claude, and this is what it had to say:

The more interesting question (and I suspect where you’re really headed): does it have to be Power BI? If the goal is LLM-generated, per-user, zero-manual-input dashboards, you might get there faster with something like Metabase (open-source, proper API), Apache Superset, or even Evidence.dev (dashboards-as-code in markdown — very LLM-friendly). The less proprietary the format, the easier the generation pipeline.

In this context, Microsoft’s move to make PowerBI a more code-based tool is a very strong step, and shows where BI is going. Like Bergevin writes, what we have now is just a half step (the dashboard still needs to be created using the UI, and that can’t happen on a Mac), but assuming Microsoft walks the talk, soon we will see thousands of GitHub repos littered with PowerBI dashboard building code.

Presently, LLMs are going to learn from it, and then if you are already a Microsoft shop, and you want to make more modern (i.e. hyperpersonalised) dashboards, you will still be able to do it using PowerBI - once dashboards can be written as code, LLMs can directly build them for you!
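
To make that concrete, here is a minimal Python sketch of what "dashboards as code" buys you. Everything in it is hypothetical: the `build_dashboard` helper, the spec fields and the user profiles are made up for illustration, and none of it is PowerBI's actual project format. The point is only that once a dashboard is a plain-text definition, producing one per user is a matter of generating text, which is exactly what LLMs are good at.

```python
import json

# Hypothetical user profiles; in practice these might come from an HR or CRM system.
USERS = {
    "asha": {"region": "South", "metrics": ["on_time_delivery", "cost_per_shipment"]},
    "ravi": {"region": "North", "metrics": ["shipment_volume"]},
}


def build_dashboard(user: str) -> str:
    """Return a text (JSON) dashboard definition tailored to one user.

    The schema is invented for this sketch; it is not PowerBI's file format.
    """
    profile = USERS[user]
    spec = {
        "title": f"Dashboard for {user}",
        "filters": {"region": profile["region"]},
        "visuals": [
            {"type": "line", "metric": metric, "group_by": "week"}
            for metric in profile["metrics"]
        ],
    }
    # sort_keys gives a stable ordering, so diffs between versions stay small.
    return json.dumps(spec, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(build_dashboard("asha"))
```

Because the output is just text, it can be committed to a repo, reviewed, and regenerated whenever a user's profile changes.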

My sense is that Microsoft is unlikely to have gone down this path alone - the likes of Tableau and Looker won’t be far behind either (Tableau already has an MCP server), and soon we will find that the whole “artisanal dashboarding by hand” approach (drag-drop, one size fits all) will be a thing of the past.

If you are a BI “Engineer”, this means you better start getting comfortable with writing code, and not just SQL. You also better (slowly) start getting comfortable with software engineering practices, since they will become a part of the standard BI workflows.

If you are an engineering leader (or CTO), you know that soon your BI teams can also start using good software engineering practices, and hopefully that means better RoI for you from them. So far you haven’t been able to do much about their reluctance to vibe code, but soon they will need to get efficient in that aspect as well.

And if you are a (reluctant) user of dashboards, you know that soon things are going to get hyperpersonalised, even if you are a Microsoft or Tableau shop.

And if you are an open source competitor (Metabase / Superset / Lightdash / …) you better get your enterprise sales act together and get enterprises to move to you now to hyperpersonalise dashboards, before they are able to do the same using the tools they already use. Maybe you have a year or two for this.

I have a few points I want to make here:

Firstly, the passage below is not a correct understanding of how PowerBI works and whether any code is written or not.

As many of you might already know (but I didn’t!!), traditionally, PowerBI has been a “visual dashboarding tool”, which means that “developers” don’t really write code but actually drag and drop widgets on a screen. There is a complicated and proprietary PBIX format for storing the dashboards, and these are binaries that cannot be read by anything / anyone but PowerBI itself.

PowerBI uses different technologies for the different layers:

1. Data Ingestion & Cleaning - This uses something called M code or M Query, which is Microsoft's proprietary SQL-lite language for connecting to data sources and doing basic cleaning before you ingest (M can be used within Excel in the same way). There is some M code to be written here.

2. Data Transformation - Once the data is in, you use something called DAX to create measures and calculated columns. DAX takes in attributes and lets you specify simple logic (though you can write complex logic if you want).

3. Data Visualization - Finally, once the attributes are in the form you need, you create visuals, format them, and can also do conditional formatting and other things like visual-level filtering. There is no code in this layer, but it needs a thorough understanding of the underlying two layers.

So in summary, to have a fully automated, functional dashboard, you do have to write code, namely M Query and DAX, and it is not trivial.

Coming to your second assumption, that "They work less well when your customer teams realise that they are incompatible with LLMs and vibe coding" (basically that no code = no text to train on): that is not true. Ever since my teams got reduced, I have been vibe coding PBI dashboards from scratch, and LLMs are as good for that purpose as they are for, say, SQL or PySpark, which I also use them for.

The problem that this new update in PBI is solving is the SDLC one. Essentially, all the code is in the PBIX file (which you can't read if you don't have PBI Desktop). There is no common code-repo structure where all the code resides and which can be checked out, no branch and master setup, and so on. Code quality is a huge problem. The way current teams try to solve this is to keep the M code and DAX layers as 'thin' as possible, essentially shifting all the data transformation to the backend system with a proper SDLC setup and leaving just the visual layer in the PBIX file.
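
To illustrate the SDLC point, here is a small Python sketch (my own illustration, using only the standard library's `difflib`). It assumes the measure definitions have been saved as plain text; the file names and the two versions of the measure are made up. Once the code is text, a reviewer can see exactly what changed, which is not possible with a binary PBIX file.

```python
import difflib

# Two versions of a hypothetical measure definition, stored as plain text.
old_version = """\
Total Sales = SUM(Sales[Amount])
"""
new_version = """\
Total Sales = SUMX(Sales, Sales[Quantity] * Sales[Price])
"""


def review_diff(old: str, new: str) -> list[str]:
    """Return a unified diff, the same view a code review would show."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile="measures.dax (main)",      # hypothetical file name
            tofile="measures.dax (feature branch)",
            lineterm="",
        )
    )


if __name__ == "__main__":
    print("\n".join(review_diff(old_version, new_version)))
```

The same kind of diff is what a branch-and-merge workflow would surface in a pull request, which is the piece that has been missing so far.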