Choosing an LLM

Forget benchmarks. You need to choose the LLM that does best for your use case.

There are some words in the LLM world that have started to infinitely irk me. I just don’t like them. And I find it irritating that everyone else seems to always talk about them. Things like:

SOTA (for state of the art)

Benchmarks

Leaderboards

etc.

There is nothing LLM-specific about this irritation. In general (and you might find it surprising since I’m a data guy) I don’t like metrics. I don’t like to reduce evaluations to a single number when it’s possible to evaluate more holistically.

At my last job, for example, one constant point of contention between the product managers and me was over the metrics used to determine whether an algo was working. I would keep harping on “look at some outputs and you’ll get a feeling for whether it’s alright”, something most others didn’t subscribe to.

In any case.

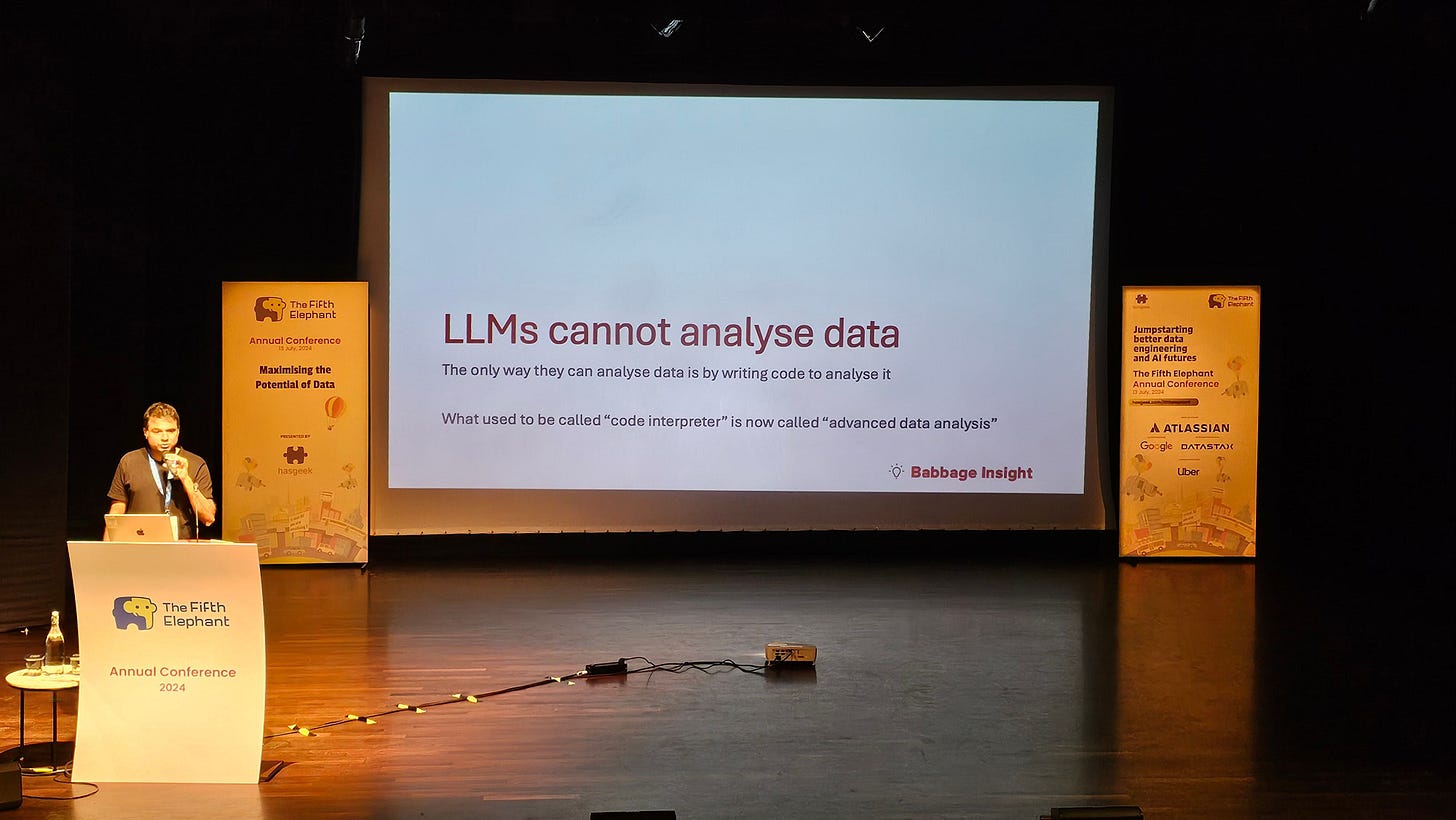

LLMs analysing data

As you know, we are building an AI data analyst (hey, we need some help with better branding. If you have any bright ideas, let me know!). As I mentioned in my previous post, the broad framework is that we use an LLM to generate ideas and translate them into code, and then a deterministic checker (also an algorithm) runs that code to evaluate and rank the ideas.
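To make the generate-then-check split concrete, here is a minimal Python sketch. It is illustrative only: the checker described above is written in R, and the LLM side is stubbed out. Each "idea" is a plain Python function standing in for LLM-generated cleaning code, and `rank_ideas` and the `usable_values` score are hypothetical names chosen for this example. The point is that the checker, not the LLM, runs every candidate, discards the ones that fail, and produces a reproducible ranking.

```python
import statistics
from typing import Callable, List, Optional, Tuple

Series = List[Optional[float]]
Idea = Tuple[str, Callable[[Series], Series]]


def rank_ideas(ideas: List[Idea], data: Series,
               score: Callable[[Series], float]) -> List[Tuple[str, float]]:
    """Run each idea's code on a copy of the data, score the result, rank best first."""
    results = []
    for name, code in ideas:
        try:
            value = score(code(list(data)))  # a copy, so one idea cannot mutate the input
        except Exception:
            continue  # code that fails to run is dropped, not ranked
        results.append((name, value))
    # Highest score first; ties broken by name so the ranking is deterministic.
    return sorted(results, key=lambda r: (-r[1], r[0]))


def usable_values(cleaned: Series) -> float:
    """Hypothetical score: how many non-missing values survive cleaning."""
    return float(sum(v is not None for v in cleaned))


def fill_with_median(d: Series) -> Series:
    med = statistics.median(v for v in d if v is not None)
    return [med if v is None else v for v in d]


temps: Series = [20.05, None, 19.45, 31.05]
ideas: List[Idea] = [
    ("drop missing values", lambda d: [v for v in d if v is not None]),
    ("fill missing with median", fill_with_median),
    ("broken idea", lambda d: d["Temp"]),  # raises TypeError on a list
]
ranking = rank_ideas(ideas, temps, usable_values)
print(ranking)  # [('fill missing with median', 4.0), ('drop missing values', 3.0)]
```

Swapping in a different `score` function changes what "best" means without touching the LLM side, which is the appeal of keeping the checker deterministic.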

To get around the prompt engineering bottleneck, we are using a framework called DSPy (one of my big personal engineering achievements last week was writing the data analysis code, including the verifier, entirely in R, while still invoking DSPy!). DSPy constructs the prompts and sends them to the LLM.

So far we’ve largely been using OpenAI (4o). Today I wanted to experiment with open source models, given that our ultimate aim is to deploy our solutions on-premise. So I made minor changes to the code to hit the Ollama versions of open source models running on my laptop (a base model 14” MacBook M1 Pro). And the results were very, very different.
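For anyone wanting to try the same switch, here is a minimal sketch of calling an Ollama-served model directly over its local HTTP API, bypassing DSPy (which is what we actually route through). It assumes Ollama's default endpoint, `http://localhost:11434/api/generate`, and a locally pulled model tag such as `llama3.1`; the helper names are hypothetical. Ollama also accepts an optional `"format": "json"` field that asks the server to constrain the reply to JSON. Whether that would help with the prompt below is untested here.

```python
import json
import urllib.request

# Ollama's default local endpoint (assumes the Ollama server is running on this machine).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_ollama_request(model: str, prompt: str,
                         json_mode: bool = False) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    if json_mode:
        # Ask Ollama to constrain the reply to valid JSON.
        payload["format"] = "json"
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, json_mode: bool = False) -> str:
    """Send the request and return the model's text reply."""
    request = build_ollama_request(model, prompt, json_mode)
    with urllib.request.urlopen(request) as response:
        # With "stream": False, the reply is a single JSON object whose
        # "response" field holds the generated text.
        return json.loads(response.read())["response"]


# Usage (needs a running Ollama server with the model already pulled):
# print(generate("llama3.1", "Reply with a JSON list of three colours.", json_mode=True))
```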

In any case - we are using LLMs to generate code to analyse data.

This is the prompt as generated by DSPy:

---

Follow the following format.

Question: ${question}
Data: ${data}
Answer: JSON

---

Question: Present a list of 'cleaning_methods' (in R) where every one of the cleaning methods to clean the data is listed, along with specific python code to implement that particular cleaning method for the relevant underlying field. Go beyond obvious things like filling in missing values and dropping duplicates. Make sure column data types are accurate. The purpose of cleaning is to get insight from data, not machine learning. So ignore things like normalisation. Also, don't suggest deleting entire rows unless they are all NA. If there are outliers, replace them rather than remove the rows. And only replace gross outliers (maybe an order of magnitude difference). If there are missing values, fill them in rather than remove rows. If all values in a row are missing, delete those rows. Use tidyverse where possible, with all parts of the code in a single chain. Instead of importing packages, use the "dplyr::mutate" kind of format. Assume the dataframe is called "df". Each line of code needs to have a LHS and a RHS This must be a JSON list with rows in the following example format: {"cleaning_method": "METHOD_NAME", "column": "COLUMN NAME", "code": "<insert R code here>"}. The results can and must be any combination. The entire output should be a single parseable JSON. before outputting it, revalidate it to ensure the entire output is fully valid JSON. Ths JSON should be ordered in a logical manner - assuming we will apply each cleaning method in order.
So for example, if you want to remove rows with all NAs, that should appear before any other NA treatment

Data:
    Latlong             Source  Something  SomethingElse  Temp   Index       DT
0   (12.9716, 77.5946)  gfs     869.91     5.5            20.05  1688688000  2023-07-07 00:00:00
1   (12.9716, 77.5946)  era5    869.91     5.5            31.33  545029200   1987-04-10 05:00:00
2   (12.9716, 77.5946)  era5    869.91     5.5            19.45  825454800   1996-02-27 21:00:00
3   (12.9716, 77.5946)  era5    869.91     5.5            31.05  1585227600  2020-03-26 13:00:00
4   (12.9716, 77.5946)  era5    869.91     5.5            22.11  939182400   1999-10-06 04:00:00
5   (12.9716, 77.5946)  era5    869.91     5.5            17.76  946749600   2000-01-01 18:00:00
6   (12.9716, 77.5946)  era5    869.91     5.5            24.08  1013230800  2002-02-09 05:00:00
7   (12.9716, 77.5946)  era5    869.91     5.5            21.53  1185854400  2007-07-31 04:00:00
8   (12.9716, 77.5946)  era5    869.91     5.5            31.92  639122400   1990-04-03 06:00:00
9   (12.9716, 77.5946)  era5    869.91     5.5            27.34  1088679600  2004-07-01 11:00:00
10  (12.9716, 77.5946)  era5    869.91     5.5            33.28  1330855200  2012-03-04 10:00:00
11  (12.9716, 77.5946)  era5    869.91     5.5            28.05  1460595600  2016-04-14 01:00:00
12  (12.9716, 77.5946)  era5    869.91     5.5            24.91  1600437600  2020-09-18 14:00:00
13  (12.9716, 77.5946)  era5    869.91     5.5            27.37  594370800   1988-11-01 07:00:00
14  (12.9716, 77.5946)  era5    869.91     5.5            21.79  621277200   1989-09-08 17:00:00
15  (12.9716, 77.5946)  era5    869.91     5.5            28.95  904467600   1998-08-30 09:00:00
16  (12.9716, 77.5946)  era5    869.91     5.5            19.65  720136800   1992-10-26 22:00:00
17  (12.9716, 77.5946)  era5    869.91     5.5            25.84  1053806400  2003-05-24 20:00:00
18  (12.9716, 77.5946)  era5    869.91     5.5            22.09  1181073600  2007-06-05 20:00:00
19  (12.9716, 77.5946)  era5    869.91     5.5            31.58  920710800   1999-03-06 09:00:00

This is what OpenAI (4o) returns:

[
  { "cleaning_method": "Remove rows with all NAs", "column": "all", "code": "df <- df %>% filter(rowSums(is.na(.)) != ncol(.))" },
  { "cleaning_method": "Replace missing values with column median", "column": "Temp", "code": "df <- df %>% mutate(Temp = ifelse(is.na(Temp), median(Temp, na.rm = TRUE), Temp))" },
  { "cleaning_method": "Replace gross outliers in Temp", "column": "Temp", "code": "df <- df %>% mutate(Temp = ifelse(Temp > 100 | Temp < -100, median(Temp, na.rm = TRUE), Temp))" },
  { "cleaning_method": "Convert DT column to Date type", "column": "DT", "code": "df <- df %>% mutate(DT = as.Date(DT))" },
  { "cleaning_method": "Convert Index column to integer", "column": "Index", "code": "df <- df %>% mutate(Index = as.integer(Index))" },
  { "cleaning_method": "Split Latlong into separate Latitude and Longitude columns", "column": "Latlong", "code": "df <- df %>% separate(Latlong, into = c('Latitude', 'Longitude'), sep = ', ', convert = TRUE)" },
  { "cleaning_method": "Ensure Source column is a factor", "column": "Source", "code": "df <- df %>% mutate(Source = as.factor(Source))" }
]

This is fairly good for what we need.

This is what Llama 3.1 8B returns:

Answer: It appears you're trying to analyze a block of text that represents weather data in a specific format. I'll do my best to help you with that. The text seems to be a list of 19 entries, each representing a weather observation at a specific location (12.9716, 77.5946) on different dates. The format is consistent across all entries, with the following fields:

1. Entry number (1-19)
2. Model used (era5 or gfs)
3. Pressure value (869.91)
4. Temperature value (in degrees Celsius, e.g., 5.5, 20.39, etc.)
5. Time stamp in Unix epoch format (e.g., 1386471600, 2023-08-28 03:00:00)

To answer your question, I'll need more context or a specific query to focus on. Could you please provide more information about what you're trying to achieve with this data? Are you looking for:

* A summary of the temperature values across all entries?
* A comparison of temperature values between different models (era5 vs gfs)?
* Something else?

Please let me know, and I'll do my best to assist you!

It completely sidestepped the prompt and did its own thing. Manu had a hypothesis that Llama 3.1 70B might do better.

Llama 3.1 with 70B parameters (on Hugging Face)

Using the Hugging Face Hub, this is what we got:

[
  { "cleaning_method": "Remove rows with all NAs", "column": "All", "code": "df %>% drop_na()" },
  { "cleaning_method": "Convert Latlong to numeric", "column": "Latlong", "code": "df %>% dplyr::mutate(Latlong = gsub('\\(|\\)', '', Latlong) %>% strsplit(', ') %>% sapply(function(x) as.numeric(x)))" },
  { "cleaning_method": "Convert DT to POSIXct", "column": "DT", "code": "df %>% dplyr::mutate(DT = as.POSIXct(DT, origin = '1970-01-01'))" },
  { "cleaning_method": "Replace outliers in Temp", "column": "Temp", "code": "df %>% dplyr::mutate(Temp = ifelse(Temp > 40, 40, ifelse(Temp < 0, 0, Temp)))" },
  { "cleaning_method": "Replace outliers in Index", "column": "Index", "code": "df %>% dplyr::mutate(Index = ifelse(Index > 100, 100, ifelse(Index < 0, 0, Index)))" },
  { "cleaning_method": "Fill missing values in Temp", "column": "Temp", "code": "df %>% dplyr::mutate(Temp = ifelse(is.na(Temp), mean(Temp, na.rm = TRUE), Temp))" },
  { "cleaning_method": "Fill missing values in Index", "column": "Index", "code": "df %>% dplyr::mutate(Index = ifelse(is.na(Index), mean(Index, na.rm = TRUE), Index))" },
  { "cleaning_method": "Convert Something and SomethingElse to factor", "column": "Something", "code": "df %>% dplyr::mutate(Something = as.factor(Something))" },
  { "cleaning_method": "Convert Something and SomethingElse to factor", "column": "SomethingElse", "code": "df %>% dplyr::mutate(SomethingElse = as.factor(SomethingElse))" }
]

Again, this is close to what we want, but it ignores the specific instruction that each line of code needs to have an LHS and an RHS. These snippets only have the RHS (for example, "df %>% drop_na()" rather than "df <- df %>% drop_na()").
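Since the prompt's formatting rules are mechanical, this part of the comparison can itself be checked deterministically. Below is a minimal Python sketch; the function name, the regex, and the sample strings are assumptions made for illustration. It parses a reply, confirms it is a JSON list of objects with the three required keys, and flags any `code` string that does not start with an R assignment such as `df <- ...`. The LHS test only looks at the start of each snippet, so it is a heuristic, not an R parser. The samples are abbreviated one-item excerpts of the replies quoted above.

```python
import json
import re
from typing import List

REQUIRED_KEYS = {"cleaning_method", "column", "code"}
# Heuristic: the snippet must start with an R assignment such as "df <- ..." or "df = ..."
# (the negative lookahead stops "df == ..." from counting as an assignment).
LHS_PATTERN = re.compile(r"^\s*[A-Za-z.][A-Za-z0-9._]*\s*(<-|=(?!=))")


def check_cleaning_output(raw: str) -> List[str]:
    """Return a list of problems with a model's reply; an empty list means it passed."""
    try:
        items = json.loads(raw)
    except json.JSONDecodeError as err:
        return [f"not valid JSON: {err.msg}"]
    if not isinstance(items, list):
        return ["top-level JSON value is not a list"]
    problems = []
    for i, item in enumerate(items):
        if not isinstance(item, dict):
            problems.append(f"item {i}: not a JSON object")
            continue
        missing = REQUIRED_KEYS - set(item)
        if missing:
            problems.append(f"item {i}: missing keys {sorted(missing)}")
            continue
        if not LHS_PATTERN.match(str(item["code"])):
            problems.append(f"item {i}: code has no LHS assignment")
    return problems


# Abbreviated one-item excerpts of the replies quoted above.
gpt4o_reply = '[{"cleaning_method": "Convert Index column to integer", "column": "Index", "code": "df <- df %>% mutate(Index = as.integer(Index))"}]'
llama70b_reply = '[{"cleaning_method": "Remove rows with all NAs", "column": "All", "code": "df %>% drop_na()"}]'
llama8b_reply = "Answer: It appears you're trying to analyze a block of text..."

for name, reply in [("4o", gpt4o_reply), ("Llama 3.1 70B", llama70b_reply), ("Llama 3.1 8B", llama8b_reply)]:
    print(name, check_cleaning_output(reply) or "passed")
```

Run over full replies for each candidate model, a check like this turns "does it follow our prompt?" into a repeatable, task-specific test.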

I’ve also tried CodeLlama and Llama 3, both running on my laptop. Neither impressed.

Model evaluation

What I’m getting at with this extra-long post (thanks to all the prompts and replies) is that model evaluation is sensitive to your context. You might be looking at external benchmarks and leaderboards and all that, but what ultimately matters is which system is best for the task at hand.


For example, based on my limited explorations today, OpenAI appears to be our best bet for our task of generating code to analyse data (I’m yet to try 4o-mini), though I’ll possibly need to find something else for customers who insist on an on-prem deployment. And I have arrived at this by looking at my specific tasks.

The thing with benchmarks is that they can be gamed. Anyone who has ever built an index (apart from a free float market cap weighted asset price index) will know that you can cleverly choose weights to give you the answer you want. It is similar with benchmarks: it is easy to design a benchmark that makes your favourite system win.
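A toy example shows how much the weights matter. The two models, the two tasks, and every score below are invented for illustration. Simply shifting weight from one task to the other changes which model tops the composite "leaderboard".

```python
# Invented scores (0-100) for two hypothetical models on two hypothetical tasks.
scores = {
    "model_a": {"coding": 90, "reasoning": 60},
    "model_b": {"coding": 65, "reasoning": 85},
}


def composite(task_scores: dict, weights: dict) -> float:
    """Weighted average of task scores, built the same way as a weighted index."""
    total_weight = sum(weights.values())
    return sum(task_scores[task] * w for task, w in weights.items()) / total_weight


def leaderboard(weights: dict) -> list:
    """Model names sorted from best to worst composite score."""
    return sorted(scores, key=lambda m: composite(scores[m], weights), reverse=True)


coding_heavy = {"coding": 7, "reasoning": 3}
reasoning_heavy = {"coding": 3, "reasoning": 7}
print(leaderboard(coding_heavy))     # ['model_a', 'model_b']  (81.0 vs 71.0)
print(leaderboard(reasoning_heavy))  # ['model_b', 'model_a']  (79.0 vs 69.0)
```

Neither set of weights is "wrong", which is exactly the problem: whoever picks the weights picks the winner.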

A more general note

What I’ve been getting at in this post is that different LLMs are good at different tasks, and without actually trying them out, it is really hard to know which is best for yours. This makes things hard if you are providing a general purpose chatbot, or what people derisively call a “thin layer around an LLM”.

If the kinds of questions you ask your LLM vary considerably, whichever model you pick is likely to be the wrong choice for many of those questions. And that could result in subpar performance overall.
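A small worked example makes the cost concrete. Assume, purely hypothetically, two models, three question types, and a traffic mix of 100 questions. The sketch compares the expected accuracy of each single model with the ceiling you would hit if every question type went to whichever model is best at it.

```python
# Hypothetical accuracy (%) of each model on each question type.
accuracy = {
    "model_a": {"write_code": 90, "summarise": 60, "extract": 70},
    "model_b": {"write_code": 60, "summarise": 90, "extract": 80},
}
# Hypothetical traffic mix: how many of every 100 questions are of each type.
mix = {"write_code": 40, "summarise": 40, "extract": 20}


def expected_accuracy(per_type: dict) -> float:
    """Accuracy (%) over the whole mix, weighting each type by its share of traffic."""
    total = sum(mix.values())
    return sum(per_type[q] * n for q, n in mix.items()) / total


single = {m: expected_accuracy(acc) for m, acc in accuracy.items()}
best_per_type = {q: max(acc[q] for acc in accuracy.values()) for q in mix}
ceiling = expected_accuracy(best_per_type)

print(single)   # {'model_a': 74.0, 'model_b': 76.0}
print(ceiling)  # 88.0
```

Even the better single model leaves 12 points on the table, because each model is the wrong choice for a large share of the questions. With a narrower, coherent mix the gap shrinks, since one model can be best at nearly everything you ask.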

This final bit is largely aimed at VCs (a bunch of them subscribed to this newsletter after we met them in March and April). Some of them declined to invest in us, saying we are “not sectoral enough”. I can understand their decision from the point of view that you can’t build everything for everyone.

However, the way I’d want them to think about LLM-based companies is this: is there a coherent set of questions that the product asks of LLMs, specific enough that a particular LLM (or set of LLMs) is best at answering them? If the answer is yes, then the system can be super optimised, and a good product can be built. If you have too many kinds of questions you want to ask of LLMs, the inevitable tradeoffs in picking the model mean that you might end up with a randomly subpar product (see my old post on why LLMs are arbit).