More on LLMs freaking out

Some updates on product development at Babbage, and some hilarity on LLMs' intelligence.

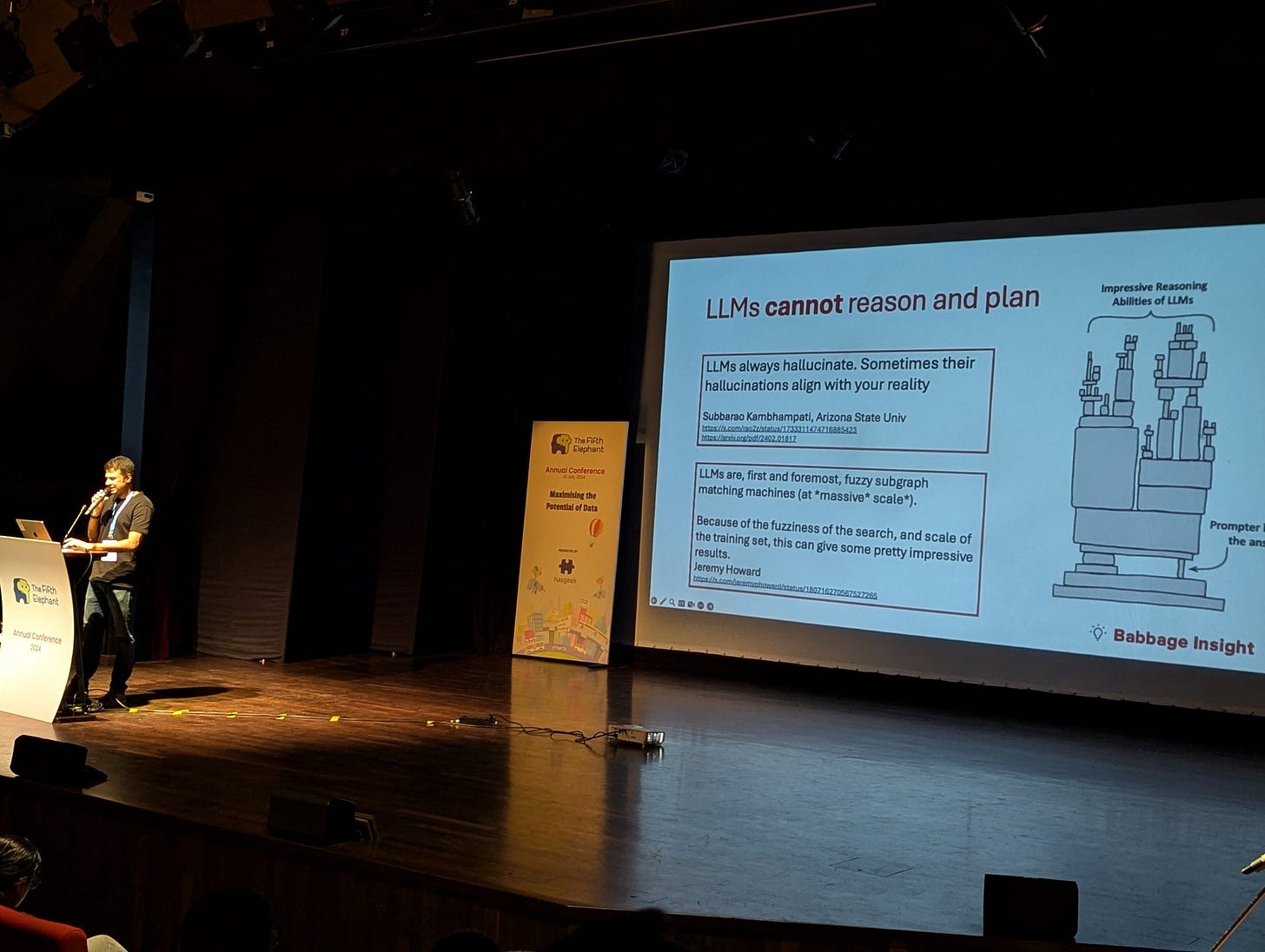

Over the weekend I gave a talk at The Fifth Elephant. I spoke about the work we’ve been doing here at Babbage Insight, about building an AI data analyst.

I THINK the talk was well received. At least, there were LOTS of questions immediately following my talk, and a whole bunch of back channel conversation later on.

I spoke about what is now to me “the usual stuff” - that LLMs cannot analyse data, that LLMs are freaky in terms of what they are good and bad at, that LLMs cannot reason and plan, and that the best way to use LLMs is to pair them with a deterministic “verification system” - what Subbarao Kambhampati of ASU calls the “LLM-Modulo” framework.
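To make that pairing concrete, here is a minimal sketch of a generate-and-verify loop. It is in Python rather than R, it is not Babbage's actual code, and every name in it (`llm_modulo`, `fake_llm`, `checker`) is made up for illustration. The LLM is replaced by a stand-in function so the sketch runs offline.

```python
from typing import Callable, List, Optional


def llm_modulo(
    propose: Callable[[str, List[str]], str],
    verify: Callable[[str], List[str]],
    task: str,
    max_rounds: int = 5,
) -> Optional[str]:
    """Keep asking the generator until the deterministic verifier accepts."""
    feedback: List[str] = []
    for _ in range(max_rounds):
        candidate = propose(task, feedback)  # stochastic step (the LLM)
        problems = verify(candidate)         # deterministic step (the checker)
        if not problems:
            return candidate
        feedback = problems                  # critique feeds the next attempt
    return None  # nothing passed within the budget


# Hypothetical stand-in for an LLM: wrong on the first try, right after feedback.
def fake_llm(task: str, feedback: List[str]) -> str:
    return "as.numeric(Rain)" if feedback else "as.character(Rain)"


# Hypothetical deterministic checker: an empty list means "accepted".
def checker(candidate: str) -> List[str]:
    return [] if "as.numeric" in candidate else ["Rain should be numeric"]


result = llm_modulo(fake_llm, checker, "clean the Rain column")
```

The point of the design is that the verifier, not the LLM, decides when to stop, so a bad proposal costs one more round rather than a bad output.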

The talk was recorded, though I’m told the recording will be behind the Hasgeek paywall (for “members” only - actually it’s there already, for members). I’ll see how much of it I can share. Meanwhile, here are the slides I used.

The conference was excellent. The crowd was great. I met (and re-met) a lot of people. From Twitter, I got to know that I had missed meeting a lot of people I would’ve otherwise wanted to meet. If I had any cribs, the only one was that there was no collar mic provided - I had to choose between being stuck to the podium mic or using a handheld. I took the latter.

The gist of the talk was about how we can get around the limitations of LLMs and get them to analyse data. So far, in the course of our work at Babbage, we have focussed on using LLMs to clean data. This is a good representative task to start off with because it is a well-contained task, and cleaning is a microcosm of the entire data analysis process.

From the talk’s perspective, it wasn’t a great idea, as many in the audience weren’t able to appreciate that cleaning is an “intelligent” process. Then again, if you want to be honest, you only want to talk about stuff that you’ve actually done.

In any case, even after some training and retraining, and having deterministic checkers to make sure LLMs don’t run wild, there are some interesting results. One of the runs today produced this hilarious result.

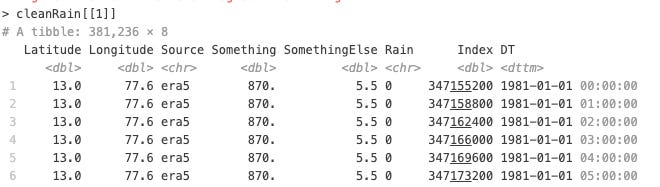

This is a screenshot of the original dataset - a dataset of hourly rainfall in Bangalore over 40-odd years.

After going through the algo as it stands now, this is the output (or this is the output after ONE of the runs, given that the algo is necessarily stochastic):

Most impressively, the latlong column has been converted into two separate numeric columns. If you were to see this alone, you would think that LLMs are truly impressive and that your job as a data scientist is at risk. After you have let your heart skip a beat, you can look at the rest of the columns.

You will notice that the last column (“DT”) has been converted into GMT. Very impressive cleaning. Something and SomethingElse (I had named these columns since I didn’t know what they meant in the dataset) have been converted to numeric type.

Index is funny - in one iteration, the LLM (we’re using GPT-4o) recognised that this must be an Epoch time, and immediately converted it into a datetime object. And in the next iteration, the LLM, for whatever reason, decided to roll back the change and convert it to a double instead!! (and our algorithm isn’t good enough yet to prevent such stuff from happening)
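One crude way a deterministic checker could stop this kind of flip-flopping is to "lock" a column's type once a conversion has been accepted, and reject any later proposal that changes it. The sketch below is an assumption about how such a guard might look, not what our algorithm does. The function names and the type labels are hypothetical.

```python
from typing import Dict, List

Schema = Dict[str, str]  # column name -> type label, e.g. {"Index": "datetime"}


def locked_columns(history: List[Schema]) -> Schema:
    """Columns whose type changed in an accepted iteration, with their latest type."""
    locked: Schema = {}
    for before, after in zip(history, history[1:]):
        for col, new_type in after.items():
            if before.get(col) != new_type:
                locked[col] = new_type
    return locked


def rollback_problems(history: List[Schema], proposed: Schema) -> List[str]:
    """Complaints for every locked column that the proposal tries to change."""
    problems = []
    for col, accepted in locked_columns(history).items():
        if col in proposed and proposed[col] != accepted:
            problems.append(
                f"{col}: already converted to {accepted}, "
                f"refusing change to {proposed[col]}"
            )
    return problems


# Accepted schemas so far: Index went from character to datetime.
history = [
    {"Index": "character", "Rain": "character"},
    {"Index": "datetime", "Rain": "character"},
]
# The next iteration wants to turn Index into a double.
complaints = rollback_problems(history, {"Index": "double", "Rain": "character"})
```

The trade-off is obvious: a lock this blunt would also block a legitimate correction of an earlier bad conversion, so in practice it would need an escape hatch.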

The funniest, though, is Rain. In its infinite wisdom, which includes noticing that Index is an epoch time, and that Latlong needs to be split into two columns, the LLM decided to keep Rain (mm of rain each hour) as a character column! The algorithm ran for multiple iterations, but not once did it figure that Rain needs to be converted to numeric!
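This particular miss is the sort of thing a deterministic check can catch without any intelligence at all: if nearly every non-missing value in a character column parses as a number, flag the column. A minimal sketch, with a made-up 95% threshold, made-up missing-value markers, and toy data rather than the real rainfall file:

```python
from typing import Dict, List, Optional

MISSING = {"", "NA"}  # assumed markers for missing values


def looks_numeric(values: List[Optional[str]], threshold: float = 0.95) -> bool:
    """True if at least `threshold` of the non-missing values parse as floats."""
    present = [v.strip() for v in values if v is not None and v.strip() not in MISSING]
    if not present:
        return False
    parsed = 0
    for v in present:
        try:
            float(v)
            parsed += 1
        except ValueError:
            pass
    return parsed / len(present) >= threshold


def unconverted_numeric_columns(
    table: Dict[str, List[Optional[str]]], types: Dict[str, str]
) -> List[str]:
    """Character columns that look like they should have been numeric."""
    return [
        col
        for col, vals in table.items()
        if types.get(col) == "character" and looks_numeric(vals)
    ]


# Toy data for illustration only.
table = {
    "Rain": ["0.0", "1.2", "NA", "10.5"],
    "Station": ["a", "b", "c", "d"],
}
types = {"Rain": "character", "Station": "character"}
flagged = unconverted_numeric_columns(table, types)
```

A complaint like this could then be fed back to the LLM as feedback for the next iteration.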

Go figure.

I tried running this whole algorithm again to see if I’d noticed some freak effect. In the next run, Rain was duly converted to numeric. And then the LLM, in its infinite wisdom, decided to “remove outliers” - all instances of Rain above 10mm per hour were replaced with the median value.
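A guard against this kind of overreach could be as simple as insisting that a type conversion never changes a value that was already a clean number. The sketch below is hypothetical (names, tolerance, and toy values are all assumptions): it compares the raw strings with the cleaned numbers and reports the rows that were silently altered.

```python
import math
from typing import List, Optional


def changed_values(
    before: List[str], after: List[Optional[float]], tol: float = 1e-9
) -> List[int]:
    """Row indices where a value that parsed cleanly before cleaning differs after."""
    changed = []
    for i, (raw, cleaned) in enumerate(zip(before, after)):
        try:
            original = float(raw)
        except (TypeError, ValueError):
            continue  # was not a number to begin with, nothing to compare
        if cleaned is None or not math.isclose(original, cleaned, abs_tol=tol):
            changed.append(i)
    return changed


# Toy example: heavy-rain hours were overwritten with 0.0 during "cleaning".
raw_rain = ["0.0", "2.5", "14.0", "NA", "31.2"]
cleaned_rain = [0.0, 2.5, 0.0, None, 0.0]
altered_rows = changed_values(raw_rain, cleaned_rain)
```

Any non-empty result means the "cleaning" step changed the data rather than just its type, which is grounds for rejecting that iteration.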

Yes, we are in the beginning stages of our product development. And yes, your job as a data scientist is still safe. And AGI isn’t happening any time soon.

PS: If you were wondering looking at the screenshots above, yes, I use R. Programming in Python is like speaking in Hindi for me - I’m capable of doing it, but it draws a lot of mental energy and I’d like to avoid that unless absolutely necessary. My “achievement” for yesterday was to figure out how to leverage GPT-4o and the DSPy package while retaining most of the programming in R.

Is data analysis even a good use case for encoder-only transformers, which are trained to identify word vector closeness?