The Art of Data Science: Chapter Twelve

Hello!

I know I'm a bit late on this one, but upon carefully reviewing my New Year's resolution, it turns out that I had only resolved to send out one newsletter each calendar month, and had made no promises on when it would be sent! So I can't be faulted for sending this out on the 15th!

Anyway, I've made a career switch. After a brief experiment with employment, I decided to move on and re-start my Strategy and Data Consulting business. This time I've formally incorporated in the UK, though I'm happy to take on customers anywhere. In fact, the first client of this re-started business is a Singapore-based investment management marketplace.

Now on to the real stuff...

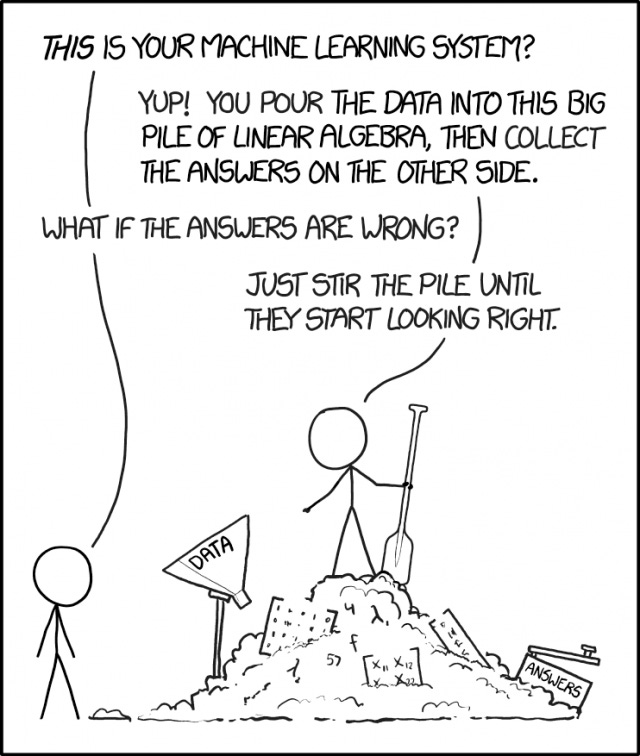

Machine Learning is easy

Sometime last year, the online comic XKCD published this strip on machine learning, which touched a raw nerve with lots of data scientists. I'm sure I've shared it here at least once, and I've shared it on my blog at least five times, and on LinkedIn at least twice. Now, I've shared it here at least twice! :)

The best thing that this comic has done (in my opinion) is to give a name to the way data science is practised - "stirring the pile". Sometimes just giving something a name gives you a platform you can use, and I've been using the platform of this cartoon to occasionally rant about the way data science is done. And of late, I've been taking this rant to another level!

Leaving rants aside, I think one reason why stir-the-pile data science has thrived is that organisations are yet to properly integrate their data science teams. As I'd written earlier (I don't remember where, now), in most organisations there is a sort of gulf between the business and the data science team.

In general, the business side of the organisation seldom understands the data side, and instead either treats the data scientists as gods (in which case any input from data science is taken without questioning) or as "a bunch of nerds doing some supposedly cool stuff" (in which case anything they do is largely ignored). In either case, the organisation doesn't get the full intended impact of having the data science team.

One of the reasons this happens is a lack of communication. In the first place, "machine learning" and "artificial intelligence" are rather intimidating terms, sounding advanced enough to be equated with magic. Then, data scientists themselves do little to comfort the business people by explaining the models to them.

While the maths behind many machine learning techniques might be rather complicated (I don't understand most of it - given my aversion towards linear algebra), most commonly used methods are rather intuitive and the essence can be understood by most business people.

For example, logistic regression can be explained as giving a particular weight to each of the explanatory variables and then taking a weighted sum, and then making the yes/no decision based on whether this weighted sum crosses a threshold. Decision trees are even more intuitive to explain to business people, since they are commonly used in business strategy as well (random forests are less intuitive - among other things, business people are inherently suspicious of anything that involves randomness).
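To make the "weighted sum and threshold" explanation concrete, here is a minimal sketch in Python. The variable names, weights, intercept and cut-off are all made up for illustration (a pretend loan-approval model); in a real model the weights would be learnt from data rather than typed in by hand.

```python
import math

# Hypothetical weights for a made-up loan-approval model.
# None of these names or numbers come from a real data set.
WEIGHTS = {"income_thousands": 0.08, "years_employed": 0.3, "existing_loans": -0.9}
INTERCEPT = -2.0
THRESHOLD = 0.5  # probability cut-off for saying "yes" (an assumption)


def weighted_sum(features):
    """Intercept plus each explanatory variable times its weight."""
    return INTERCEPT + sum(WEIGHTS[name] * value for name, value in features.items())


def probability(features):
    """Squash the weighted sum into a number between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-weighted_sum(features)))


def decide(features):
    """Say yes if the probability crosses the threshold."""
    return probability(features) >= THRESHOLD


applicant_a = {"income_thousands": 20, "years_employed": 5, "existing_loans": 1}
applicant_b = {"income_thousands": 10, "years_employed": 1, "existing_loans": 2}

for name, applicant in [("A", applicant_a), ("B", applicant_b)]:
    print(name, round(weighted_sum(applicant), 2), decide(applicant))
```

With a cut-off of 0.5, asking whether the probability crosses the threshold is the same as asking whether the weighted sum is above zero, which is the version that is easiest to explain to a business audience.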

In other words, stripped of the mathematics, machine learning is actually not so hard to understand, and with some effort on the part of the data scientists, the business side can actually understand what they're doing. And when this happens, the business and the data sides of the organisation can interact rather closely, generating much better value for the organisation.

And when data scientists work closely with the business, and have to explain and occasionally justify their models, it leaves less room for "stirring the pile". More importantly, it leads to models that are easier for the business to understand, implement and take ownership of. It's a win all around (except maybe for some data scientists who now need to understand what their models are doing).

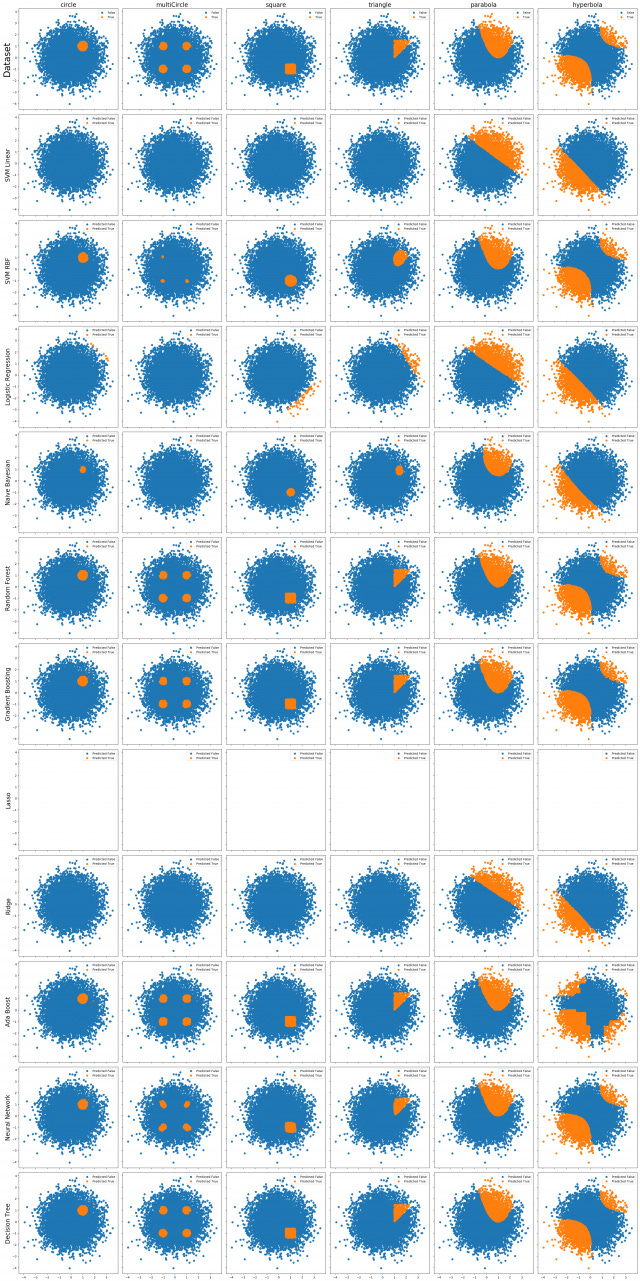

In any case, I've made a small attempt to make the life of pile-stirrers easier, by creating synthetic data sets that look like some familiar geometrical shapes, and then analysing which commonly used machine learning techniques can pick them out the best. The idea is to help develop intuition on what methods are best used in what situations. This picture gives a summary, but I encourage you to read the whole piece.

I've even uploaded some code on Github. Feel free to download and play around with it.

The Duckworth Lewis method

I know this newsletter is getting heavier on sport, but I guess that just reflects my biases and interests. Last month I read the joint autobiography of Frank Duckworth and Tony Lewis, who came up with the eponymous method for setting targets for rain-affected cricket matches. While I've written a rather detailed blog post on the topic, it is a book on analytics, so it merits a mention in this newsletter as well.

Skipping all the details (which you can find in the blog post), a couple of things stand out about the method. Firstly, while it is ubiquitous now, the way it got selected was rather random. The method was first proposed by Duckworth at an academic conference (on statistics), and developed further by Lewis as part of a student's undergraduate project. And then they managed to explain the method to the secretary of the England and Wales Cricket Board, and given that it was superior to everything else around (this was the mid-1990s, the dark days of the "highest scoring overs" rule) it got accepted.

It was as random as that. There was no bidding, no tenders, no competitive process. And the method still reigns supreme. There have been competitors (most prominently the "VJD" method proposed by V Jayadevan of Thrissur), but inertia, among other things, has meant that DL survives.

Oh, I must mention that statistician Srinivas Bhogle, who first wrote a post that explained the Duckworth Lewis method, and then an account of why the VJD method is superior, is a subscriber of this newsletter. After finishing the book, I had a rather enlightening email conversation with Dr. Bhogle about cricket analytics in general, and the rain rule in particular. Dr. Bhogle also finds mention in the book - mainly thanks to his defence of the VJD method. If you're interested in cricket analytics, you should definitely check out his detailed blog post on the topic.

Coming back to the book, the other thing to point out is that Duckworth and Lewis hardly got paid for the method. The International Cricket Council (ICC) quickly adopted it and made it part of their intellectual property (to the extent that the software is not even widely available), but its inventors weren't compensated much. While the rise of private teams (driven by leagues such as the IPL) might mean there is more money in cricket analytics, it still seems like a rather random field.

Finally, I must mention that the Duckworth Lewis method seems like a pretty badly engineered piece of software. Until 2007, it only ran on DOS! I guess I don't need to say much more.

Oh, and speaking of cricket and analytics, I wrote a couple of IPL auction preview pieces for the Hindustan Times last month.

A backlash against Deep Learning?

As we discussed in the last edition of this newsletter, Deep Learning is probably the data story of the last decade. The availability of computing resources and vast amounts of data has meant that it has been possible to actually fit mathematical models that are "several layers deep" without giving rise to any inconsistencies or letting the solution blow up.

But as one would imagine with any technology that gets wide attention, it is being massively overused (I've had potential clients ask me for "deep learning based solutions" to rather simple problems), and that has brought its own backlash against the technique.

In the last edition, we saw this article in the MIT Technology Review that called Deep Learning "AI's one trick pony". Now we have the McKinsey Quarterly and Wired talking about the potential downsides of deep learning. Both pieces are very good.

The Wired piece is decidedly bearish on Deep Learning. Consider this sample:

> According to skeptics like Marcus, deep learning is greedy, brittle, opaque, and shallow. The systems are greedy because they demand huge sets of training data. Brittle because when a neural net is given a “transfer test”—confronted with scenarios that differ from the examples used in training—it cannot contextualize the situation and frequently breaks.

The McKinsey Quarterly piece, while still bearish on Deep Learning, is a bit more solution-oriented, offering some workarounds for the problems it lists. Here is a sample:

> Obtaining large data sets can be difficult. In some domains, they may simply not be available, but even when available, the labeling efforts can require enormous human resources. Further, it can be difficult to discern how a mathematical model trained by deep learning arrives at a particular prediction, recommendation, or decision. A black box, even one that does what it’s supposed to, may have limited utility, especially where the predictions or decisions impact society and hold ramifications that can affect individual well-being.

In summary, it seems like deep learning is having a sort of reality check - there are situations where it works brilliantly, mostly in the field of computer vision, and we don't expect it to go away from there. However, soon enough the data requirements and lack of explainability will get to data scientists, and we will see less of deep learning in use in other fields.

This is all for the good.

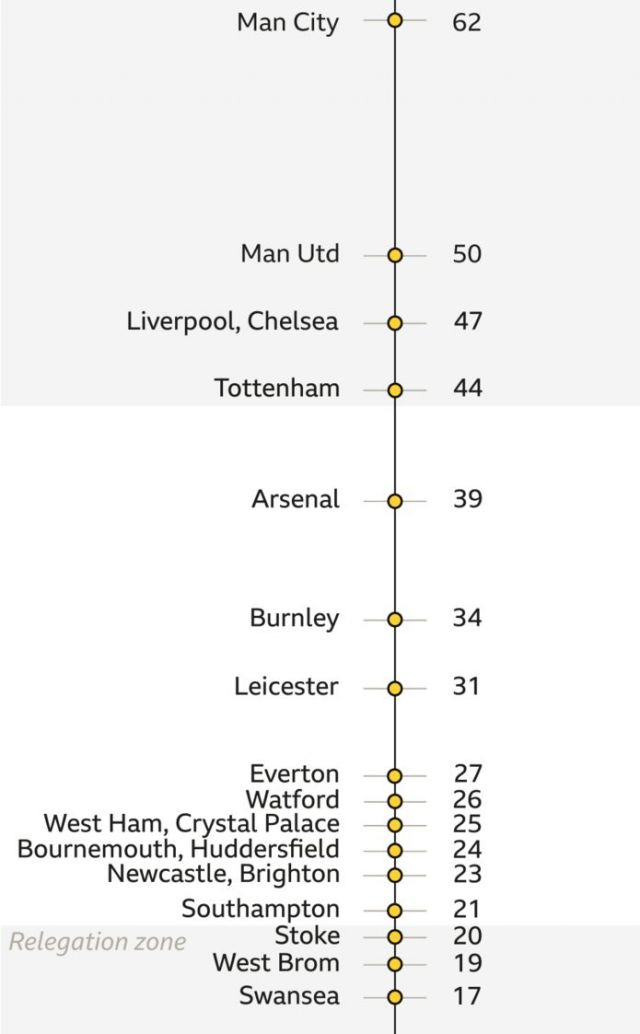

Chart of the Edition

This edition's chart comes from the world of football, via Aditya Bhaskar, a subscriber of this newsletter. It is a rather innovative way to show English Premier League positions. Normally league tables are represented exactly that way - like a table. Teams are ordered in descending order of standing, and a look at the table tells you relative positions of the teams.

Except that when you have a Premier League season like this one, where there is a large gap between numbers 1 and 2, and again between 7 and 8, and the relegation scrap includes half the league, the normal league tables can deceive. One day Newcastle are in the relegation spots, and then they win a game and go all the way up to 13th. It's that kind of a season. So the BBC came up with this fantastic visual representation of the league table. Of course this sacrifices information such as games played (this is after 23 rounds), wins, losses, etc., but it gives a clearer picture of which team stands where.

Aditya has taken this to another level, using a time-based version of this chart to analyse league performance.

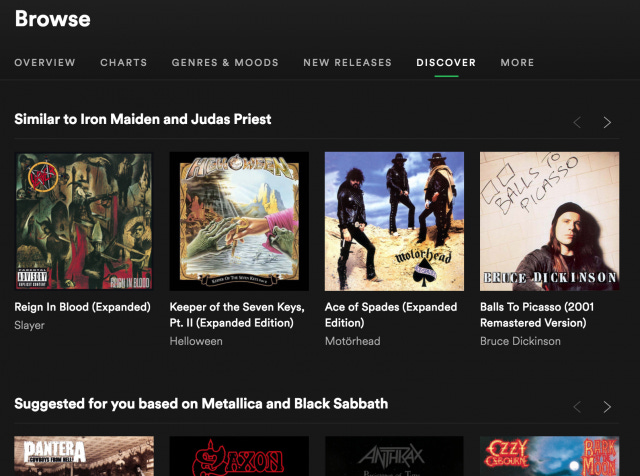

Spotify's clustering algorithm

I was about to send off this newsletter when I noticed this in my Spotify. Can someone enlighten me about how Spotify's clustering algorithm works? Do they use something like the Music Genome Project? Why does Spotify put Iron Maiden and Judas Priest in one group, and Metallica and Black Sabbath in another?

A request

I'm in the process of building a website for my newly-relaunched business. I'm looking for beta testers of the website who can give me feedback. If you're willing to take a look and provide feedback, do let me know. I'll send you the link and feedback form once the beta prototype is ready.

That's about it for now. Please share it if you like it, and keep the feedback coming! And again - if you want to beta test my website, do let me know!

Cheers

Karthik