The Art of Data Science: Chapter Sixteen

Hello!

Yes, this newsletter still exists. I know this edition has taken so long that it's almost time for the next one! It just happened to be a rare period of busyness earlier this month, but the upside of the delay is that I think I've collected some better material. Here goes.

Statisticians continue to get back at Machine Learning

Over the last couple of years, the balance of power in data science circles has shifted in favour of Machine Learning, and away from statistics. In fact, in several places it is now rather common to use the phrase "data scientist" synonymously with "machine learning engineer", and, to put it bluntly, a lot of "data scientists" simply don't care about statistics.

Given this extreme shift in the balance of power, it was inevitable that statisticians would start hitting back. In March, for example, a bunch of statisticians from Greece and Cyprus published a paper showing that conventional statistical techniques outperform machine learning techniques when it comes to time series forecasting.

To me, this isn't too surprising, since statistical time series methods are purpose-built tools, while machine learning techniques are more generic tools applied, in this case, to a specific context. As I wrote at the time, using machine learning for time series forecasting is like using the screwdriver on a Swiss Army Knife rather than a dedicated screwdriver.

Apart from the central message, the paper contains several interesting ideas, so I encourage you to read the whole thing.
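For readers who haven't met one of these purpose-built tools, here is a minimal sketch of simple exponential smoothing, one of the classic statistical forecasting methods. I'm not claiming it is the method that performed best in the paper; the function names are my own, the input series is made up, and in practice the smoothing parameter `alpha` would be fitted to the data rather than fixed by hand.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the final smoothed level of `series`.

    The level starts at the first observation and is then updated as
    level = alpha * y + (1 - alpha) * level for each later observation.
    """
    if not series:
        raise ValueError("series must be non-empty")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = float(series[0])
    for y in series[1:]:
        level = alpha * y + (1.0 - alpha) * level
    return level


def forecast(series, alpha, horizon):
    """Simple exponential smoothing gives a flat forecast: the final level, repeated."""
    level = simple_exponential_smoothing(series, alpha)
    return [level] * horizon


if __name__ == "__main__":
    # Made-up monthly numbers, for illustration only.
    monthly_sales = [112, 118, 132, 129, 121, 135, 148, 148]
    print(forecast(monthly_sales, alpha=0.3, horizon=3))
```

The whole model is a single parameter and a loop, which gives a sense of how specialised these tools are compared with a general-purpose neural network.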

Statisticians aren't done, though. A bunch of researchers from UC Davis and Stanford claim that neural networks (the basis of deep learning, and the source of most of today's hype about machine learning) can be approximated by polynomial regression!

From the abstract:

[...] one may choose to routinely use polynomial models instead of NNs, thus avoiding some major problems of the latter, such as having to set many tuning parameters and dealing with convergence issues. We present a number of empirical results; in each case, the accuracy of the polynomial approach matches or exceeds that of NN approaches

Then again, one of the strengths of deep learning is that it learns well when there is a large number of predictors. It remains to be seen whether that holds for polynomial regression as well!
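To make the polynomial regression idea concrete, here is a minimal sketch, assuming NumPy is available. It expands the predictors into every monomial up to a chosen degree and fits them by ordinary least squares. This is not the authors' code; the helper names are hypothetical, and the toy data is a known quadratic so the fit can be checked.

```python
from itertools import combinations_with_replacement
from math import comb

import numpy as np


def polynomial_features(X, degree):
    """Expand X (n_samples x n_predictors) into every monomial up to `degree`.

    Column order: a constant, then all degree-1 terms, then all degree-2
    products (squares and pairwise interactions), and so on.
    """
    n_samples, n_predictors = X.shape
    columns = [np.ones(n_samples)]
    for d in range(1, degree + 1):
        for idx in combinations_with_replacement(range(n_predictors), d):
            # Multiply together the chosen predictor columns, e.g. x0 * x1.
            columns.append(np.prod(X[:, list(idx)], axis=1))
    return np.column_stack(columns)


def fit_polynomial_regression(X, y, degree):
    """Fit the expanded features by ordinary least squares; return coefficients."""
    design = polynomial_features(X, degree)
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef


def predict(X, coef, degree):
    """Predict with coefficients returned by fit_polynomial_regression."""
    return polynomial_features(X, degree) @ coef


def n_terms(n_predictors, degree):
    """Number of monomials of total degree <= `degree` in `n_predictors` variables."""
    return comb(n_predictors + degree, degree)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(200, 2))
    # A known quadratic with no noise: y = 1 + 2*x0 - 3*x0*x1
    y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 0] * X[:, 1]
    coef = fit_polynomial_regression(X, y, degree=2)
    # Columns are [1, x0, x1, x0^2, x0*x1, x1^2]
    print(np.round(coef, 6))
    print(n_terms(100, 3))
```

The `n_terms` helper shows why the many-predictors question matters: the number of columns grows combinatorially, and with 100 predictors at degree 3 it is already 176,851, so in practice one would have to cap the degree or prune the interactions.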

And while we're on the topic, Avinash Tripathi of the Takshashila Institution has written an excellent post, titled "Two cousins meet", about the collaboration between Machine Learning and Econometrics, and how the two fields are influencing each other.

Thinking about Machine Learning

Benedict Evans of Andreessen Horowitz has an extremely interesting post on how we should think about machine learning. His assessment is similar to mine: the phrase "machine learning" leads people to equate the concept with magic, and to credit it with some kind of superpower. It is more realistic, he writes, to think about Machine Learning in terms of a "washing machine".

Washing machines are robots, but they're not ‘intelligent’. They don't know what water or clothes are. Moreover, they're not general purpose even in the narrow domain of washing - you can't put dishes in a washing machine, nor clothes in a dishwasher (or rather, you can, but you won’t get the result you want). They're just another kind of automation, no different conceptually to a conveyor belt or a pick-and-place machine. Equally, machine learning lets us solve classes of problem that computers could not usefully address before, but each of those problems will require a different implementation, and different data, a different route to market, and often a different company. Each of them is a piece of automation. Each of them is a washing machine.

Evans draws another, more plausible analogy to explain the ongoing revolution in machine learning: he compares it to relational databases. Excerpting more of the post wouldn't do it justice, so I strongly encourage you to read the whole thing.

Meanwhile, here is a nice visual introduction to machine learning.

Improving line graphs

An interesting blog I've discovered recently is written by Eugene Wei, an early employee of Amazon. While most posts come with a long prelude on the early days of Amazon, they contain plenty of very interesting insights, such as this one on how to make Excel line graphs better, based on Edward Tufte's principles of visualisation.

The post is pretty hands-on, as it takes an example of a simple multi-line graph, and shows how you can make it better. If you are interested in visualisation, you should read it. Even the long prelude on Amazon's analytics package might be interesting!

And while on Tufte, I might have already recommended his masterpiece "The Visual Display of Quantitative Information". It remains the canonical book on visualisation. Wei summarises a few of its principles in his post:

Graphical displays should

show the data

induce the viewer to think about the substance rather than about methodology, graphic design, the technology of graphic production, or something else

avoid distorting what the data have to say

present many numbers in a small space

make large data sets coherent

encourage the eye to compare different pieces of data

reveal the data at several levels of detail, from a broad overview to the fine structure

serve a reasonably clear purpose: description, exploration, tabulation, or decoration

be closely integrated with the statistical and verbal descriptions of a data set.

Graphics reveal data. Indeed graphics can be more precise and revealing than conventional statistical computations.

A couple of graphs

The Guardian has an interesting visualisation of the games so far in the FIFA World Cup, using a time-series bar graph to show the goal difference between the teams in each game. While it is certainly a novel way to show the data, I'm not so happy about bars being drawn on both sides of the X axis: the information in a bar graph lies in the bars' lengths, and bars on both sides of the axis can confuse the reader.

The shots-taken graphic below the goals graphic is interesting, because it shows how the balance of play shifted through the game, but the colour scheme (black background and all that) makes it a bit hard to read, or even to notice!

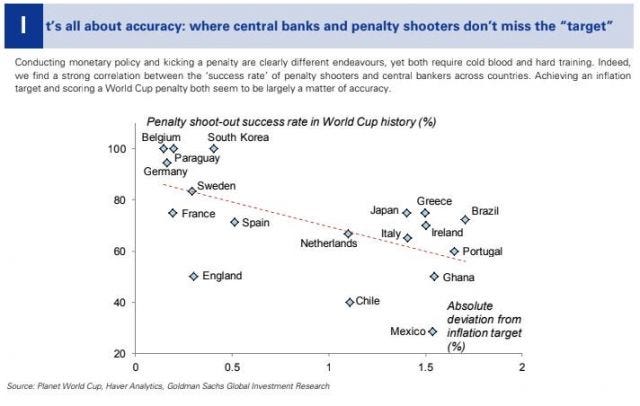

While we're on football, investment banks have produced their usual analyst reports trying to predict who will win the World Cup. Thanks to MiFID II, the reports aren't in the public domain, but someone tweeted this scatter plot from the Goldman Sachs report. No complaints about the plot itself, but it's a useful study in spurious correlation.

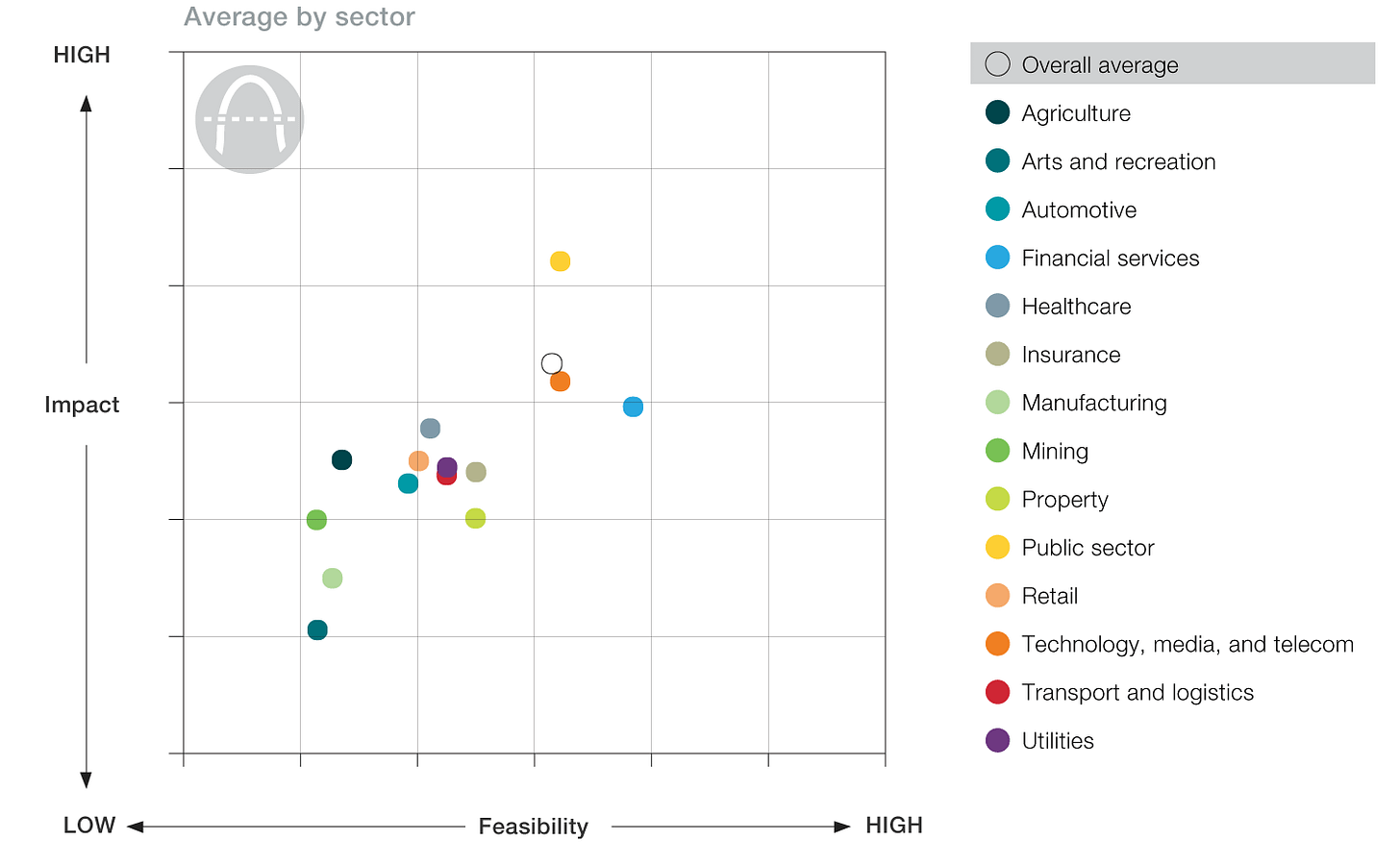
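The variables in the Goldman plot aren't reproduced here, so this is a generic illustration rather than a reanalysis: a minimal sketch, assuming NumPy, of how spurious correlations turn up when you search many unrelated variables against a small sample. The sample size of 32 (the number of teams at this World Cup) and the 100 candidate predictors are arbitrary choices, and the function name is hypothetical.

```python
import numpy as np


def max_spurious_correlation(n_samples, n_candidates, seed=0):
    """Correlate one random outcome with many unrelated random predictors.

    Every variable is independent noise, so any correlation found is spurious.
    Returns the index and value of the strongest (largest absolute) correlation.
    """
    rng = np.random.default_rng(seed)
    outcome = rng.normal(size=n_samples)
    candidates = rng.normal(size=(n_candidates, n_samples))
    correlations = np.array([np.corrcoef(c, outcome)[0, 1] for c in candidates])
    best = int(np.argmax(np.abs(correlations)))
    return best, float(correlations[best])


if __name__ == "__main__":
    idx, r = max_spurious_correlation(n_samples=32, n_candidates=100)
    print(f"candidate {idx} correlates with the outcome at r = {r:.2f}, by pure chance")
```

Change the seed and the "winning" variable typically changes too, which is the tell-tale sign: a correlation found by searching through many candidates needs to be checked on fresh data before anyone bets on it.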

And McKinsey continues to outdo itself in producing graphics that are nearly impossible to read. This time, they've actually managed it with a scatter plot! The article, on the strategic impact of blockchain, started off well, but I couldn't continue reading after seeing this graphic:

Using colour to display so many categories is unwarranted, and forces the reader to keep turning her head between the graph and the legend. The categories could much more easily have been labelled on the graph itself. The colour scheme also looks like a default, with lots of similar colours, rather than one chosen for maximum contrast given the number of categories to show. And while the plot is interactive, it isn't clear how to make use of the interactivity! Once again, I urge you to read Eugene Wei's post, or, better still, Tufte's book!

Among other things

Factor Daily profiles B Ravindran, a professor at IIT Madras who works on reinforcement learning. A few issues back, the Economist's Technology Quarterly had a special issue on data and surveillance.

Here in Europe, the GDPR regime is upon us, and companies are using it as an opportunity for more marketing (emails asking for "consent" to keep sending emails), while some are simply blocking access. Check out the GDPR Hall of Shame, curated by Owen Williams.

And during my Bangalore trip in May, I made my debut as a TV pundit, analysing the Karnataka state elections for News9, a local channel. The highlight of my appearance was a 5-minute monologue on random sampling.

That's about it for now. This newsletter will be back soon, though probably not on the 1st of July. So much for New Year resolutions!

Cheers

Karthik Shashidhar

for Bespoke Data Insights Limited

http://bespokedatainsights.com