The Art of Data Science: Chapter Four

Programming note: This newsletter seems to be landing in the "promotions" folder of a lot of subscribers' inboxes. If you don't want that to happen, add the "from" address to your address book. And do let me know if there's anything else I can do to prevent this.

Foundations of Data Science

That's the name of the book I started reading earlier this week. It is written by three eminent computer scientists - Avrim Blum, John Hopcroft and Ravindran Kannan. The (draft) PDF version of the book is available for free download.

The authors make no bones about their ambition, as they make clear right in the introduction:

With this in mind we have written this book to cover the theory likely to be useful in the next 40 years, just as an understanding of automata theory, algorithms, and related topics gave students an advantage in the last 40 years.

The book is highly technical and is by no means an easy read. On page 1 (after the introduction and other formalities) is a derivation of the Markov Inequality, quickly followed by derivations of the Chebyshev Inequality and the Law of Large Numbers. And that's possibly the easiest part of the book. I consider myself to be rather good at maths, but found myself skipping most derivations because I simply couldn't understand them.

I've done one pass through the book. I can't claim to have "read" it, since there was no way I could understand most of it. Here are some pertinent observations:

The Law of Large Numbers states that when you draw a large enough sample from a given distribution, the mean of the sample is a good approximation of the population mean. This concept forms the basis of sample surveys of all kinds (voting intent, expenditure, etc.).
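To see the law at work in a sample survey, here is a minimal Python simulation. It assumes a hypothetical population in which 42% of voters intend to vote a particular way, and polls n randomly chosen voters; the 42% figure and the `poll` helper are made up purely for illustration.

```python
import random


def poll(true_share: float, n: int, rng: random.Random) -> float:
    """Share of n randomly sampled voters who say "yes", when true_share of the population would."""
    yes_votes = sum(rng.random() < true_share for _ in range(n))
    return yes_votes / n


TRUE_SHARE = 0.42  # hypothetical population value
rng = random.Random(7)  # fixed seed so the run is reproducible

# As the sample grows, the sample share should settle down near the population share
for n in (10, 100, 1_000, 100_000):
    print(f"n = {n:>7,}: sample share = {poll(TRUE_SHARE, n, rng):.3f}")
```

The typical error of such a poll shrinks roughly in proportion to 1/√n, so going from 100 to 10,000 respondents cuts it by about a factor of ten.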

While the law makes intuitive sense, it is hard to convince people that it works for something like income or wealth, which are distributed in an extremely unequal fashion (they typically follow Power Law distributions). This book clears up the doubt with a more rigorous statement of the law: the sample mean is guaranteed to be close to the population mean for large samples provided the variance of the population is finite.
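For the record, the finite-variance condition comes straight out of Chebyshev's inequality. Here is a sketch of the standard argument (my rendering, not a quote from the book), for n independent samples x_1, ..., x_n of a random variable with mean μ and variance σ²:

```latex
\begin{align*}
\bar{x}_n &= \frac{x_1 + x_2 + \cdots + x_n}{n},
  \qquad \mathbb{E}[\bar{x}_n] = \mu,
  \qquad \operatorname{Var}(\bar{x}_n) = \frac{\sigma^2}{n} \\
\Pr\left( \left| \bar{x}_n - \mu \right| \ge \epsilon \right)
  &\le \frac{\operatorname{Var}(\bar{x}_n)}{\epsilon^2}
  = \frac{\sigma^2}{n \epsilon^2}
\end{align*}
```

For any fixed ε > 0 the right-hand side goes to zero as n grows, but only because σ² is a finite number. If the variance is infinite, the bound tells you nothing.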

For power law distributions whose tail exponent is 2 or less (I'm not going into the maths here), the variance is infinite. For example, if something follows the so-called 80-20 principle, a Pareto distribution where the top 20% of entities hold 80% of <something>, then the variance of <something> across the entities is infinite, and so this version of the law of large numbers doesn't apply!

The book has a chapter on "Machine Learning". Considering that this is likely to become the canonical book on Data Science, and it has a chapter on Machine Learning, I guess we can say that Machine Learning is a subset of Data Science?
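Back to the 80-20 example: a minimal Python simulation makes the difference visible. It assumes a Pareto distribution with minimum value 1 and tail exponent α = log 5 / log 4 ≈ 1.16 (the value that produces an 80-20 split), which has a finite mean but infinite variance, and compares it with an exponential distribution, whose variance is finite. For each, it draws 20 independent samples of 10,000 and reports each sample mean as a fraction of the true population mean. The helper names are my own, chosen for illustration.

```python
import math
import random
from statistics import fmean

# Tail exponent that yields the 80-20 split; the Pareto variance is infinite whenever it is <= 2
ALPHA_80_20 = math.log(5) / math.log(4)
PARETO_MEAN = ALPHA_80_20 / (ALPHA_80_20 - 1)  # about 7.2 when the minimum value is 1


def exponential(rng: random.Random) -> float:
    return rng.expovariate(1.0)  # mean 1, variance 1


def pareto_80_20(rng: random.Random) -> float:
    return rng.paretovariate(ALPHA_80_20)  # minimum 1, finite mean, infinite variance


def relative_sample_means(draw, population_mean, n=10_000, runs=20, seed=0):
    """Means of `runs` independent samples of size n, each divided by the population mean."""
    rng = random.Random(seed)
    return [fmean(draw(rng) for _ in range(n)) / population_mean for _ in range(runs)]


for name, draw, mu in [("exponential", exponential, 1.0),
                       ("pareto 80-20", pareto_80_20, PARETO_MEAN)]:
    ratios = relative_sample_means(draw, mu)
    print(f"{name:>12}: sample mean / true mean ranges from {min(ratios):.2f} to {max(ratios):.2f}")
```

Under these assumptions the exponential sample means should all land within a few percent of the true mean, while the Pareto sample means scatter much more widely, because much of the population total sits in rare, enormous values that any given sample may or may not catch.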

As I mentioned before, I consider myself to be fairly good at maths. And I find most of the maths in this book to be rather complicated and beyond my level of understanding / appreciation. There are parts I can understand (graph theory and other discrete stuff), but the maths in most of the book went over my head.

I assume this will be the case with a fairly large proportion of the Data Scientists out there. There is no doubt about this - the maths behind data science is hard, and well beyond most data science practitioners. However, given the number of organisations that need to make business sense out of data, the demand for data scientists is high.

So data science might end up being one of those professions where a large majority of practitioners have no clue about the underlying maths and logic. In that sense, Data Science is less like Quantitative Finance (most of whose practitioners can be expected to know Random Walks and the Black-Scholes derivation), and more like working in a factory, where most workers know little about the inner workings of the machines they operate!

Overall, I'm not sure I'd recommend the book to you. It is written at a high level of abstraction, from a mathematical and computational perspective. Unless you're doing a PhD in data science (as opposed to using data science), I'm not sure you should be reading this!

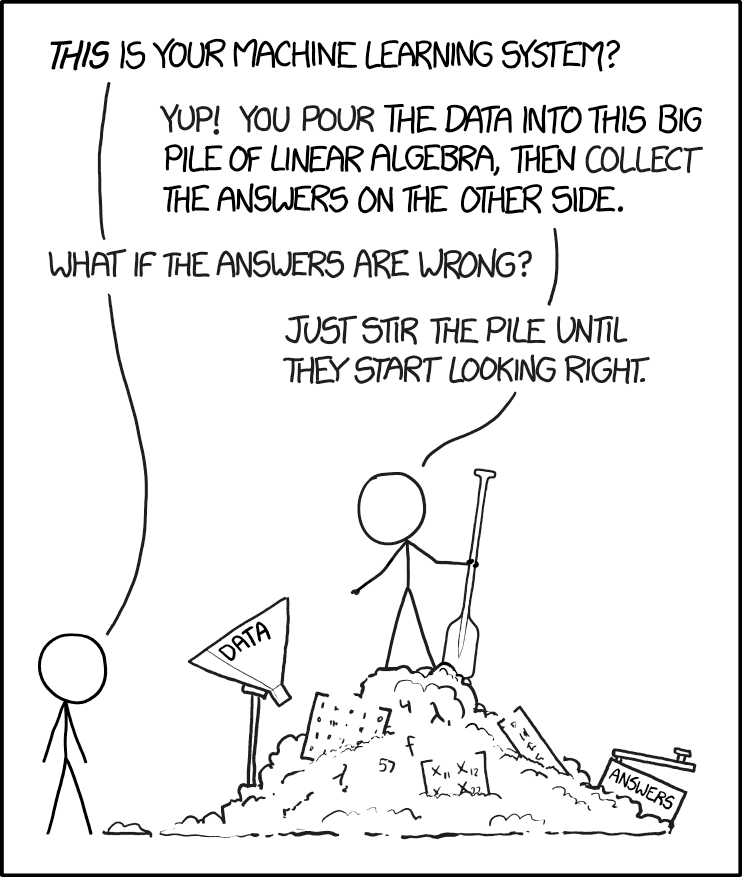

XKCD

Related to my rant last week on whether you should understand your models, XKCD has a nice comic out on machine learning. I'll leave it to explain itself. Based on my experience, I think this is quite accurate in terms of how a lot of machine learning is done:

Rating Systems

Recently I watched the first episode of the third season of Black Mirror. It's not as dark as the other Black Mirror episodes I've watched, and the premise is not that far-fetched either - there is a giant social network that everyone is part of, where people can rate each other. Each person has a publicly known average score, and what they can do is a direct function of that. The episode is about a woman who tries to push her score above a particular cutoff.

The reason I thought the plot wasn't far-fetched at all is that rating systems are everywhere. You leave, and get, a rating every time you take an Uber ride, for example. Apps constantly prompt you to rate them on the app store. When you book a holiday, you look up the rating and ranking of every hotel on TripAdvisor before going ahead with the booking. Ratings guide which restaurants you go to, or which dishes you order in. People even look at ratings while deciding who to date on OkCupid (read this excellent piece on what kind of people are most likely to get dates).

As I argue in my book (which is likely to come out in a month or two), rating systems are extremely important for increasing the flow of information between buyer and seller. Without ratings, you have little idea about a counterparty's quality apart from their own advertising. Ratings allow users of a platform to make a more informed choice about whom to deal with.

For ratings to be effective, however, they need to be aggregated properly. Using a simple average such as the arithmetic mean can lead to several issues (the Black Mirror episode illustrates some of them). As much as MS Excel tries to convince you otherwise, "average" and "mean" are not the same thing: "average" simply refers to a measure of central tendency, and the median and mode are also averages. For starters, different raters use different scales - my 4 is different from your 4. Second, ratings can be "stuffed" - you can pay people to downrate your competitors and uprate you. Third, people rate things they may not be qualified to comment on, so identifying expertise and weighting it appropriately is yet another challenge.
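One way to handle the "my 4 is different from your 4" problem is to normalise each rater's scores against their own history before averaging. Here is a minimal Python sketch of that idea, assuming a tiny hypothetical set of (rater, hotel, score) triples; the names, the data and the helper functions are all made up for illustration, and a real platform would also have to deal with stuffing and expertise weighting.

```python
from collections import defaultdict
from statistics import mean, median, pstdev

# Hypothetical (rater, item, score) triples on a 1-5 scale.
# "ben" is a generous rater and "chen" a harsh one; each rated one hotel the other didn't.
RATINGS = [
    ("ben", "hotel_a", 5), ("ben", "hotel_b", 5), ("ben", "hotel_x", 4),
    ("chen", "hotel_a", 2), ("chen", "hotel_b", 1), ("chen", "hotel_y", 3),
]


def normalise_per_rater(ratings):
    """Re-express each score as a z-score relative to that rater's own mean and spread."""
    by_rater = defaultdict(list)
    for rater, _, score in ratings:
        by_rater[rater].append(score)
    stats = {}
    for rater, scores in by_rater.items():
        spread = pstdev(scores)
        # A rater who gives every item the same score has zero spread; avoid dividing by zero
        stats[rater] = (mean(scores), spread if spread > 0 else 1.0)
    return [(rater, item, (score - stats[rater][0]) / stats[rater][1])
            for rater, item, score in ratings]


def aggregate(ratings, combine=mean):
    """Combine all scores for each item with `combine` (mean, median, ...)."""
    by_item = defaultdict(list)
    for _, item, score in ratings:
        by_item[item].append(score)
    return {item: combine(scores) for item, scores in by_item.items()}


raw = aggregate(RATINGS)
normalised = aggregate(normalise_per_rater(RATINGS))
for item in sorted(raw):
    print(f"{item}: raw mean {raw[item]:.2f}, normalised mean {normalised[item]:+.2f}")
```

In this made-up data, hotel_x gets the higher raw mean only because the generous rater happened to rate it; after normalising, it falls below the harsh rater's favourite, hotel_y. Because `aggregate` takes the combining function as a parameter, you can also swap in `median` (another kind of average), which is less sensitive to a handful of stuffed ratings.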

Overall, designing a rating system - especially the aggregation algorithm - is an art. Given that the ratings a platform displays are effectively the platform's view of the quality of the thing being rated, it is important that platforms take this process seriously.

Chart of the edition

The (dis)honour of appearing in this section of this edition of the newsletter goes to the Economic Times, which has come up with this stupendous graphic on a survey it conducted recently. The newspaper's website itself is incredibly hard to search, so I'm relying on this screenshot posted on Twitter:

How are you even supposed to read this graph? I've ranted enough about Pie Charts, both in earlier editions of this newsletter and elsewhere, but this graphic takes circular representation to new depths! In fact, it seems the graphic designer started off with the right idea, using bar graphs rather than the pie charts newspapers prefer for such data. Then, somewhere along the way, perhaps they figured the bars took up too much space and decided to curl them up? Again, a good test of a graphic's quality is whether you need the numbers in order to make sense of it - and in this case it's clear that without the numbers you can't make out anything!

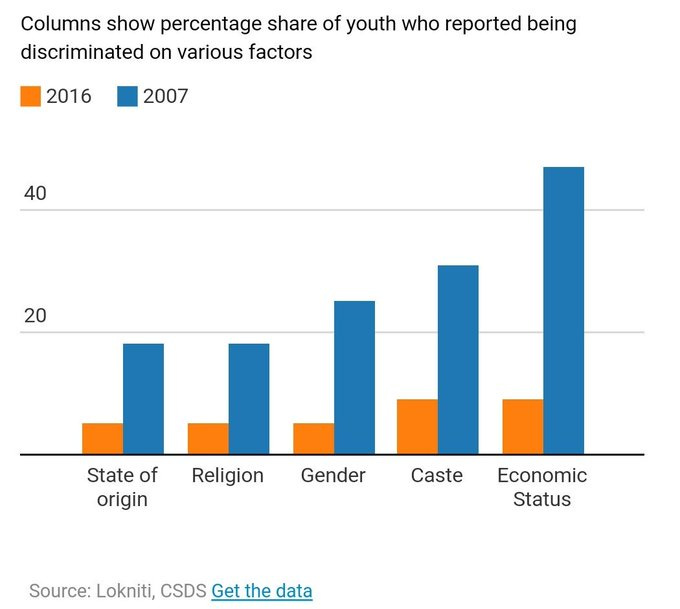

I should also give an honourable mention to this graphic from Mint, a newspaper I've been writing for over the last four years. Trust me, I didn't make this sneaky graphic, which appeared a couple of weeks back:

Notice the problem? They've put the bars for 2007 to the right of the bars for 2016. The convention is to put earlier dates on the left, so a quick look at this graph would leave you thinking that the situation has become far worse, when in fact it is the other way round.

To their credit, they quickly corrected it, and the latest version of the article on the website shows the bars in the correct order. This is a stellar example of how simple changes in visualisation and conventions can lead to vastly different narratives.

Recommendations

I have only one recommendation for you this time - if you haven't read it already, you should read Sharon Bertsch McGrayne's The Theory That Would Not Die. It is the story of Bayes' Theorem, and McGrayne does a fabulous job of describing the fortunes of this (now rather popular) theorem. Along the way, she also discusses the differences in philosophy between "frequentists" and "Bayesians".

I have a fuller review of the book here on my blog.