The Art of Data Science: Chapter Eight

Plug

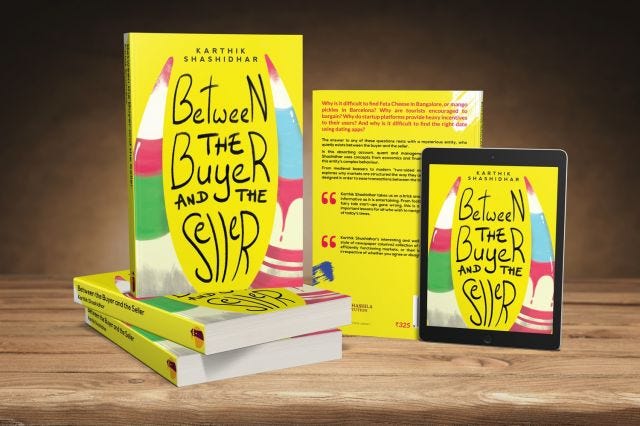

Before we get into the real "art of data science" business this edition, I want to first plug my book. Between the Buyer and the Seller has been published by the Takshashila Institution and is now available on Amazon, in both print and Kindle editions.

Do buy, read and share!

Averages

A lot of people simply assume that when you say "average", it can only refer to the arithmetic mean (sum of all values divided by the number of values). This, I believe, is the result of bad nomenclature on the part of both Microsoft Excel and SQL, which use the word "average" to refer to the arithmetic mean. The Oxford English Dictionary defines the average as:

a number expressing the central or typical value in a set of data, in particular the mode, median, or (most commonly) the mean, which is calculated by dividing the sum of the values in the set by their number.

In other words, while the mean is the most commonly used average, the two terms are not synonymous. To put it simply, the mean is an average, but the average is not necessarily the mean. The median and the mode (and the geometric and harmonic means) are just as legitimate averages as the arithmetic mean, and each of these measures has its own uses.

While there are different rules (mostly heuristics) about what measure of average to use where, a simple rule of thumb is that the average is the one number that best represents the entire set of numbers. For starters, remember that the average destroys information - we are taking information conveyed by a set of numbers and condensing that into one number. From this perspective, what is the number that we need to choose so that we preserve as much information as we can?

Again, what this rule of thumb tells us is that the measure of average to be used is conditional not only on the data set, but also on how the average is going to be used - subsequent steps in the analysis might destroy information in different ways, and the average should thus be chosen such that overall, as much information as possible is preserved.
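One way to make "best represents" concrete (this is an illustration under assumed measures of closeness, not the only reading of the rule of thumb) is to ask which single number sits closest to all the values. If closeness is measured by squared distance, the answer is the arithmetic mean; if it is measured by absolute distance, it is the median. Here is a minimal Python sketch with made-up numbers and a brute-force search over candidate values:

```python
from statistics import mean, median


def squared_loss(values, c):
    """Total squared distance between every value and the candidate c."""
    return sum((v - c) ** 2 for v in values)


def absolute_loss(values, c):
    """Total absolute distance between every value and the candidate c."""
    return sum(abs(v - c) for v in values)


# Made-up data with one large value.
values = [1, 2, 2, 3, 50]

# Candidate "representative" numbers: 0.0, 0.1, ..., 50.0.
candidates = [x / 10 for x in range(0, 501)]

best_squared = min(candidates, key=lambda c: squared_loss(values, c))
best_absolute = min(candidates, key=lambda c: absolute_loss(values, c))

print("minimises squared distance: ", best_squared, "| mean:", mean(values))
print("minimises absolute distance:", best_absolute, "| median:", median(values))
```

The two notions of "closest" pick very different numbers for the same data, which is the point: the right average depends on what you intend to do with it.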

But coming back to the heuristics that I summarily dismissed a couple of paragraphs above, the mode is useful when dealing with categorical data (after all, we can't define mean and median for such data), or with data that has been categorised into different ranges. In a histogram, for example, the mode is the most "obvious" number.

The median is of use when dealing with highly skewed distributions and those with lots of outliers - income or number of Twitter followers are good examples. The geometric mean is useful when dealing with data that gets compounded, such as interest rates. And so forth.
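To see these heuristics at work, here is a small sketch using Python's built-in statistics module. All the numbers are made up for illustration: a list of incomes with one outlier, a handful of categorical values, and three yearly growth factors.

```python
from statistics import geometric_mean, mean, median, mode

# Made-up incomes with one extreme outlier (a skewed distribution).
incomes = [20, 22, 25, 27, 30, 1000]
income_mean = mean(incomes)      # dragged upwards by the single outlier
income_median = median(incomes)  # 26.0, close to what most people earn

# Made-up categorical data: the mean is not defined here, the mode is.
colours = ["red", "blue", "red", "green"]
commonest_colour = mode(colours)  # "red"

# Made-up yearly growth factors: +10%, +20%, -10%.
growth = [1.10, 1.20, 0.90]
total_growth = 1.10 * 1.20 * 0.90
gm = geometric_mean(growth)  # compounding this for 3 years gives total_growth
am = mean(growth)            # compounding this for 3 years overstates it

print("income mean vs median:", income_mean, income_median)
print("most common colour:", commonest_colour)
print("actual growth:", total_growth)
print("geometric mean compounded:", gm ** 3)
print("arithmetic mean compounded:", am ** 3)
```

The geometric mean, compounded over the three years, reproduces the actual total growth, while the arithmetic mean compounds to something larger.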

In other words, taking an average, on average, is more of an art than a science. It is the one number that best describes the set.

It is not always the arithmetic mean.

Python Graphics

This is a topic that I'm unlikely to stop commenting about for a while, so please bear with me. I continue to use Python, and have gotten more and more comfortable with it. Thanks to some helpful colleagues, I've been learning to write code in the "pythonic way". But the one area where I continue to struggle with Python is graphics.

Graphics and visualisations are extremely intuitive in R - the native plotting tool is itself fairly good, and when you use an extension such as ggplot2, you can literally do "gymnastics" with the plots. There is a really wide variety of plots at your disposal, most of which can be drawn using rather similar-looking code (the 'gg' in question stands for "grammar of graphics"). And exporting plots to whatever format I want is also a piece of cake.

All it takes is

png('myplot.png', width, height)   # pdf() and jpeg() work the same way

<all the code to produce the plot>

dev.off()

And this can be used to put plots in whatever format you want, including PDF. Easy tools exist to put multiple plots on the same page. In case you want to create nicely formatted reports, that's trivial to do as well.

But then when it comes to Python... (remember that thanks to the ease of machine learning, enterprises are more likely to lean towards Python for data science work). Plotting is a massive pain. For starters, there is no "default" plotting infrastructure (remember that Python is a generic programming language). The "default" that people use (matplotlib) is way too verbose. Ggplot exists but is nowhere near as rich or simple as it is in R. There's Seaborn, which is pretty but has mind-numbing syntax (different kinds of plots have their own syntax).

Anyways, check out this rather hilarious description of the plotting infrastructure in Python. If you want me to save you a click, there's no "clear winner" here - each package has its own set of drawbacks.

Oh, and formatting multiple pictures into a grid or exporting them to PDF can bring untold miseries. OK, I'll stop ranting here.

Bar graphs as time series

No specific chart for this edition, so I'll talk about methodology. One of the conventions in visualisation is that if you want to show a time series, you should use a line graph. The x-axis shows time and the y-axis shows value, and all the values are joined up by a line. The most common example of this, of course, is the stock market chart. The idea is that it makes it easy to compare a value across time, and also easy to compare different series with each other.

Newspapers constantly break this "convention", using bar graphs instead to convey time series data (I suspect it's because high school maths teaches bar graphs but not line graphs or scatter plots). Usually I'm critical of such graphs, since they don't normally allow you to see the trend. This is especially true if multiple series are plotted on the same graph, in a "dodged" manner. Like this one, for example:

The problem with using bar graphs to represent time series data is that there are so many bars that they don't allow you to see the trend. At best, for each time point, they allow you to compare the different quantities, but comparison across time is extremely unintuitive. A line graph not only allows for easy comparison but also "consumes much less ink" (more on the ink ratio in a future edition).

As I recently discovered, though, there is one special case where it makes sense to use a bar graph for a time series - when there are lots of zeros in the data. In this case the interpolation can be a bit misleading - you want to plot points showing the exact values for each day.

One problem with line charts is that missing data is glossed over. So if there's no data for today, the line joins yesterday's point directly with tomorrow's. So visually it appears that today's value is somewhere in between.

Now, for something like a stock price chart, this is perfectly okay. However, if you are plotting something like the number of users who visited your site each day, you want to show zeros or missing data as zeros, and that makes the line graph look messy.
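Whichever chart you pick, the zeros have to exist in the data before they can be plotted. Here is a minimal sketch of that step in Python, assuming the daily counts arrive as a dictionary keyed by date with the empty days simply absent. The function name fill_missing_days and the sample data are hypothetical, made up for this illustration.

```python
from datetime import date, timedelta


def fill_missing_days(counts, start, end):
    """Return a (day, count) pair for every day from start to end inclusive.

    Days that are absent from counts are treated as zero.
    """
    n_days = (end - start).days + 1
    all_days = [start + timedelta(days=i) for i in range(n_days)]
    return [(day, counts.get(day, 0)) for day in all_days]


# Made-up visitor counts: nothing was recorded on 2 and 4 January.
visits = {date(2024, 1, 1): 5, date(2024, 1, 3): 2}

filled = fill_missing_days(visits, date(2024, 1, 1), date(2024, 1, 4))
for day, count in filled:
    print(day.isoformat(), count)
```

With one entry per day, a bar chart shows the empty days as gaps of height zero instead of a line sloping between the neighbouring values.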

Nevertheless, I'd use the bar graph to show a time series only if there is a single series, or if the values can be stacked on top of each other. In other words, for each time point, there should be only one bar!

That's it for this edition! Please keep the feedback coming. And share this newsletter with whoever you think might like it.

Regards

Karthik