Season 3 Episode 1: Fast Iterations

The fundamental feature of Data Science is experimentation, and you need to set up your workflow in a way that you can do a lot of it quickly.

Welcome back to The Art of Data Science. I know it’s been a long time coming. I started (and quickly ended) a season 2 in 2021, soon after I had started a full time job in Data Science. Basically, life got in the way.

Recently, I finished two years in the job, and turned forty (the two coincided), and have decided that I need to remake my portfolio around my “principal component” that is my job.

In the two weeks since I made this resolution, this has been far easier said than done. Work is hectic as usual (I came home post 7 each day this week). There are other commitments. I’ve been trying to meet people. All this together means less time than I had expected to blog (which I’ve been regular at) and write this newsletter.

But I promise - Season 3 (had to start afresh after the disaster of Season 2) will endure longer than Season 2. I’ll figure out a system where I publish exactly once in 2 weeks (irrespective of when I write). If you know of one such system, let me know. Also, I’m changing up the format to make the posts shorter. I’ll only talk about one thing at a time. So let’s begin.

Fast Iterations

All opinions expressed in this entire newsletter are my sole personal opinions and do not in any way reflect those of people / organisations I might be associated with

Data Science

Around the same time Season 2 failed to take off, I started a podcast. I called it “Data Chatter”, and it lasted 16 episodes (so a good full season). Each episode I would interview someone working in/around data. I had a whole load of fun recording it. I had less fun editing the thing (one big reason I “put NED” and never did a season 2).

One of these episodes was with my high school friend Dhanya, who runs parallel tracks as a neuroscientist and a data scientist (and she also runs). I interviewed her on why so many PhDs get attracted to data science. Her answer was interesting.

I also wrote a blogpost about this. Basically, Dhanya’s funda on what attracts PhDs in experimental subjects (such as physics, biology and neuroscience) to data science is that data science is also fundamentally about experimentation. In research, you set up experiments, collect data, analyse data and test hypotheses. In real world data science, you identify “natural” experiments and analyse (or should I say “torture”?) the data your company has already collected to test hypotheses and make business decisions.

This, of course, is very different from the way a lot of real world data scientists nowadays think about data science - as pretty much an extension of software engineering. However, this definition of data science (as experimentation) is something I’m heavily partial to.

The more the experiments, the merrier

If you, like me, consider that experimentation is key to data science, then that has a major bearing on how you set up your tooling and systems. Ceteris paribus, the more experiments you do, the better. This means, the more experiments you do in unit time, the more data science you can do in unit time, and thus the more productive a data scientist you can be.

The implications of this are that you need to be careful in terms of how you design your systems and paradigms and workflows.

Preprocessing

For example, let’s say your model has an expensive (in terms of running time) Step A followed by a relatively cheap Step B. Let’s say you are trying to experiment with and optimise Step B. Now, if you had to run both A and B for each experiment, then your experiment would be really slow.

Instead, if you were to run Step A once and store the result somewhere, you can iterate far faster with Step B alone, and get the results quickly. Running A multiple times is basically a waste of time and compute power.
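To make this concrete, here is a minimal sketch in Python of caching Step A on disk. Everything in it is a placeholder I have made up for illustration: `step_a`, `step_b`, the threshold being tuned and the cache file are all hypothetical, and your real steps will look nothing like this.

```python
import pickle
from pathlib import Path

# Counts how often the expensive step really runs (for illustration only).
CALLS = {"step_a": 0}

def step_a(raw):
    """Stand-in for the expensive step (hypothetical)."""
    CALLS["step_a"] += 1
    return [x * x for x in raw]

def step_b(features, threshold):
    """Stand-in for the cheap step being tuned (hypothetical)."""
    return sum(1 for f in features if f > threshold)

def cached_step_a(raw, cache_path):
    """Run step_a once, then reuse the stored result on later calls."""
    cache_path = Path(cache_path)
    if cache_path.exists():
        with cache_path.open("rb") as fh:
            return pickle.load(fh)
    result = step_a(raw)
    with cache_path.open("wb") as fh:
        pickle.dump(result, fh)
    return result

def run_experiments(raw, thresholds, cache_path):
    """Try several Step B settings; Step A is computed at most once."""
    results = {}
    for t in thresholds:
        features = cached_step_a(raw, cache_path)
        results[t] = step_b(features, t)
    return results
```

Note that this sketch never invalidates the cache. If the raw data or Step A itself changes, you have to delete the file (or key the file name on the inputs) yourself.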

Saving intermediate results

Let’s flip this around. What if Step B is expensive, and Step A is cheap? Now, if you are experimenting with Step A, running Step B each time will unnecessarily slow you down. Instead, can you store the results of Step A each time and analyse them, and then develop heuristics on how good the results are? That way, your dependence on Step B largely vanishes and you can iterate faster.
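One way this could look in Python: save every Step A output along with a cheap proxy score, and only send a shortlist to Step B. The `step_a` function, the `scale` parameter and especially the `proxy_score` heuristic (spread of the values) are assumptions for illustration. What makes a good heuristic depends entirely on your problem.

```python
import json
from pathlib import Path

def step_a(scale):
    """Cheap step under experiment (hypothetical): scales a fixed signal."""
    return [scale * x for x in (1.0, 2.0, 3.0)]

def proxy_score(output):
    """Cheap heuristic computed on Step A's output alone.
    The spread of values is only a placeholder for a real heuristic."""
    return max(output) - min(output)

def save_runs(scales, out_dir):
    """Store each Step A result, with its proxy score, as a JSON file."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    for s in scales:
        output = step_a(s)
        record = {"scale": s, "output": output, "proxy": proxy_score(output)}
        (out_dir / f"run_{s}.json").write_text(json.dumps(record))

def shortlist(out_dir, top_k):
    """Pick the top_k runs by proxy score; only these go on to Step B."""
    records = [json.loads(p.read_text()) for p in Path(out_dir).glob("run_*.json")]
    records.sort(key=lambda r: r["proxy"], reverse=True)
    return [r["scale"] for r in records[:top_k]]
```

Every now and then you would still run the expensive Step B on a few saved results, if only to check that the heuristic continues to agree with it.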

Using more processing power

Sometimes, we tend to take the resources given to us as a given, and assume that we cannot change the tooling we have. For example, let’s say you have a process that needs massive computation each time, and your server is slow. You might just assume that because the server is slow, you can just take longer to finish this task.

Instead, you can understand what it costs to spin up a bigger server for your experiment, and estimate how much of your effort it cuts down. You know your own salary, so it is an easy tradeoff to make on whether it is cheaper for your company to rent this new (and expensive) server or let you take longer to finish. After all, you are a data scientist - so figuring out this tradeoff should be trivial for you.
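The back-of-the-envelope calculation might look like this in Python. It assumes, crudely, that you are fully blocked while the job runs, so each hour costs the server's hourly rate plus your own. The function names and all the numbers in the usage note are made up.

```python
def total_cost(hours, server_rate, analyst_rate):
    """Cost of one option, assuming the analyst is blocked while the job runs."""
    return hours * (server_rate + analyst_rate)

def cheaper_server(small, big, analyst_rate):
    """small and big are (hours, server_rate_per_hour) tuples.
    Returns the cheaper option's label and the cost of each option."""
    cost_small = total_cost(small[0], small[1], analyst_rate)
    cost_big = total_cost(big[0], big[1], analyst_rate)
    label = "big" if cost_big < cost_small else "small"
    return label, cost_small, cost_big
```

For example, `cheaper_server((10, 1.0), (2, 20.0), 50.0)` compares a 10 hour run on a server costing 1 per hour with a 2 hour run on one costing 20 per hour, with your time at 50 per hour. The big server wins, 140 against 510. If your time were free, the small server would win instead.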

Optimal data downloads

In my short lived Season 2, I had mentioned that my then team (now a subset of my current team) used RStudio mounted on an AWS server, and then dbplyr to do our data work.

Now, this is again an issue of preprocessing. Each hit to the central data warehouse to get the data is slow. However, keeping too much data on your own server (especially if you’re using R, which is memory intensive) will slow you down. So the trick is in understanding how much data to get at a time (and what cuts) so that you don’t keep hitting the data warehouse, and can also do your analysis quickly.
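As a rough illustration (in Python rather than the R and dbplyr setup we actually used), the idea is to let the warehouse do the aggregation and bring back only the cut you need, in one hit. Here an in-memory SQLite database stands in for the warehouse, and the `orders` table, its columns and the rows in it are all invented.

```python
import sqlite3

def make_demo_warehouse():
    """In-memory SQLite database standing in for the real warehouse."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (city TEXT, day TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            ("Bangalore", "2023-01-01", 100.0),
            ("Bangalore", "2023-01-02", 150.0),
            ("Chennai", "2023-01-01", 80.0),
            ("Chennai", "2023-01-02", 120.0),
        ],
    )
    return conn

def pull_cut(conn, start_day, end_day):
    """One warehouse hit: aggregate in SQL, bring back only the cut needed."""
    query = """
        SELECT city, COUNT(*) AS n_orders, SUM(amount) AS revenue
        FROM orders
        WHERE day BETWEEN ? AND ?
        GROUP BY city
        ORDER BY city
    """
    return conn.execute(query, (start_day, end_day)).fetchall()

def average_order_value(cut):
    """Local analysis on the small cut; no further warehouse hits."""
    return {city: revenue / n for city, n, revenue in cut}
```

The cut is small enough to sit comfortably in memory, and any number of local analyses can be run on it without going back to the warehouse.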

Scripting

In general, I’m a big fan of using MS Excel. However, one issue is that it doesn’t allow you to script end to end (or maybe I haven’t tried - if you know, please let me know). For example, let’s say you want to run the experiment on more recent data. You need to have your experiment set up so that everything, from pulling the data to processing it to running the experiment itself, is completely automated.

That way, even if you make changes that necessitate you to re-run the entire pipeline, all it takes is one set of keystrokes. And in my experience, the most common culprit that ruins this automation chain is doing your data download outside of your main model script (again, I don’t know if VBA on Excel can be integrated to run SQL scripts and fetch data).
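A skeleton of what “one set of keystrokes” might mean, sketched in Python. The data pull lives inside the same script as everything else, so re-running on more recent data is just a matter of changing one date. `fetch_data` here is a hypothetical stand-in that generates fake rows. In real life it would run your SQL.

```python
from datetime import date, timedelta

def fetch_data(start, end):
    """Hypothetical stand-in for the SQL pull; returns (day, value) rows."""
    n_days = (end - start).days + 1
    return [(start + timedelta(days=i), float(i % 7)) for i in range(n_days)]

def preprocess(rows):
    """Placeholder cleaning step: drop zero values."""
    return [value for _, value in rows if value > 0]

def experiment(values):
    """Placeholder experiment: the mean of the cleaned values."""
    return sum(values) / len(values) if values else None

def run_pipeline(as_of, lookback_days=14):
    """Pull, preprocess and analyse in one call, for any end date."""
    start = as_of - timedelta(days=lookback_days - 1)
    rows = fetch_data(start, as_of)
    return experiment(preprocess(rows))
```

Re-running on newer data is then `run_pipeline(date.today())`, with no manual download step in between.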

The SQL-Excel stack

At the time of writing this, I’m hiring data scientists at all levels. The odd CV I get is from people who are very good at SQL and Excel, but not at any scripting language (such as R, Python or Julia). While I entirely appreciate both SQL and Excel, the reason I seldom proceed with such candidates is that this stack is simply not amenable to scripting and fast iteration.

The issue with SQL + Excel is that there is a natural limit to the amount of data that Excel can handle. And given that scripting is not intuitive on Excel, each iteration has to include downloading the data in the first place, loading it to Excel and then manually running all the pre-processing and then doing the analysis. Which means you can’t do fast iteration, which means there is a limit on how much experimentation you can do.

Closing Notes

Having written this much, I’m wondering if this is becoming a bit too prescriptive. Then again, the reason I had originally started this newsletter in 2018 was to pontificate on my idea of data science.

I might change the tone as we go through the season, but let me look at the feedback to the next couple of episodes, and then figure out how to go ahead.

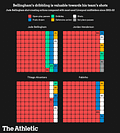

I’m not yet sure if this season will have a “visualisation of the episode”, but for the first one, I simply had to go to the first Athletic article I was reading ($), and take the first graph from it. Most of them are hard to make sense of.

I’m not even going to try and understand this.

Understanding data science from the perspective of experimentation & measurement makes sense.

Hey Karthik, very informative post. Thanks ! With regard to automating the data pull and processing within Excel, do check out Power Query ("Get Data"). It is immensely powerful, and it does away with the need for all that shady ADO code. And I loved the (challenge of the) chart you included too :) a simple clustered bar chart or even panel bar chart would've been much more informative than the waffles they've currently used.