Season 2, Chapter 1: Data Science and Business Intelligence

As I begin a new chapter in my professional life, it is time to start a new season of this newsletter.

Much has changed since I sent out the last edition of this newsletter. Most significantly, 2020 came and went.

Then, I embarked on a new career. After nearly 9 years of freelancing and leading a portfolio life, I took up a full-time job. I have joined Delhivery as Head of Analytics and Business Intelligence. Before I proceed further, I must mention that all opinions expressed in this newsletter (and in subsequent chapters of Season 2, which will continue as long as I’m employed by Delhivery) are my own, and do not in any way reflect the opinions of my employer.

OK, so I’m Head of Analytics and Business Intelligence. I’m NOT Head of Data Science. That is a different role, occupied by a different person. What is the difference, you may ask, having gotten used to the sort of continuum we see in most Indian companies where there is one “data team”?

The simplest way to explain the difference is that Data Science is tech-facing, while Business Intelligence is business-facing. (I had written about this dichotomy a long time back, though I had classified both as “data science”. This was before I had decided to stop calling myself a data scientist.) Data Science expresses its insights in the form of code, while Business Intelligence expresses them in the form of business inputs.

For example, when you send a package through my company, there is an algorithm that determines what route it will take to its destination. That algorithm has been developed by Data Science. Another algorithm determines if you have written the address correctly, and if it needs to be rectified. Data Science again. Yet another algorithm tells the delivery person, based on the address, where exactly to go. Data Science once more.

So what really does Business Intelligence do? We recommend. We give inputs to the business. We don’t “direct”, but try to give helpful inputs. Like how many delivery persons a particular delivery centre should employ. Or how to set their bonuses. Or where these delivery centres need to be. Or how to price a new client. Or what to talk to a client about.

As you can see, all of this is “inexact stuff”. And it all requires inexact solutions. The final solution in each case is a combination of what the data says and some amount of human judgment - and this is usually judgment that cannot be outsourced to an algorithm. Like I might be able to tell you the optimal spot to locate a delivery centre, but finding the appropriate real estate and striking a deal requires a human to take over. My algorithm might recommend that we charge this client ₹150 a parcel, but the salesperson doing the deal might decide it’s okay to do it at ₹140 instead.

Before we proceed, here is a quote that I had cited in one of the earlier editions of this newsletter. This is from an old blogpost by Kaiser Fung.

The data science community is guilty of talking down on the business intelligence function. There is a misperception that BI is for less skilled people doing boring things. The reality is there is more science in BI than in so-called data science (defined here as software engineering). Science, after all, is about figuring out why things are as they are. Engineers, by contrast, use our understanding of science to change the way things are.

I suppose now you have a better idea of what I, and my team, do. Oh, and we are always hiring.

Arbitrage

Before I took up this job, a lot of my formal employment (not that there’s much of it to talk about) was in financial services. Specifically, I used to work in capital markets (which is what makes me inherently wary about writing about my work in public forums like this). And in the capital markets, you are always on the lookout for “arbitrage” - opportunities to make risk-free profits.

While that direct kind of (monetary) arbitrage is not really present in my current job, I see arbitrages of a different kind. In several situations, the work setting and the problem we’re working on mean that there are things we can do more cheaply than otherwise. And yes, a lot of this has to do with analytics and business intelligence.

Dashboards

For example, consider dashboards (I had written this blogpost about them a long time back). Now, every business leader wants a dashboard so that one look at it will tell her how her business is doing. However, not everything needs to be a dashboard. The only places where you really need a dashboard are where you need real time information.

If we have to track how many packages we are moving at a point in time, we need a dashboard. If we want to know where each truck is, we need a dashboard. However, to tell you how many parcels we delivered yesterday, I don’t need to give you a dashboard, since that number doesn’t change through today (or tomorrow or … ). What we need is a report. A “periodical” that is run at the beginning of today that counts how many packages we delivered yesterday.

And once we remove the constraint for a dashboard to be “live”, it can be easily substituted by a report, which is far cheaper to produce (both in terms of programming complexity and data transfer). That is one example of an “arbitrage” we use liberally.
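To make the contrast concrete, here is a minimal sketch in R of what such a “periodical” could look like. The `deliveries` data frame, its column names and the `daily_report` function are all made up for illustration; this is not our actual reporting code.

```r
# Hypothetical delivery log; the table and column names are assumptions.
deliveries <- data.frame(
  parcel_id    = 1:6,
  delivered_on = as.Date("2021-03-10") + c(0, 0, 1, 1, 1, 2)
)

# A report is a batch job: it runs once, for a closed period,
# and the number it produces never changes afterwards.
daily_report <- function(log, run_date) {
  yesterday <- run_date - 1
  data.frame(
    report_date       = yesterday,
    parcels_delivered = sum(log$delivered_on == yesterday)
  )
}

# Run "this morning" to count what was delivered yesterday.
report <- daily_report(deliveries, run_date = as.Date("2021-03-12"))
print(report)
```

Nothing here needs to stay connected to a live data feed. The job runs once, writes one row, and that row can be emailed or stored.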

Inexact Solutions

Measure with a micrometer, mark with a chalk and cut with an axe.

I don’t know the source of the saying but it is common business folklore, and appropriate in this context. When you know that the result of your analysis is a solution that is inexact, and that is likely to be modified by a human before it gets implemented, it puts a limit on how complex or accurate the solution needs to be.

To paraphrase, when you know that you are going to cut with an axe, you can dispense with your micrometer, and maybe use a crude ruler. Knowing the context and how your solution will get used can result in far better decisions on how you construct the solution. And in most cases, when you know that you don’t need a particularly exact solution, you can get the solution with less effort.

Knowing we don’t need to be exact is a source of arbitrage for us, and allows us to spend far less effort on things.

And so the list of arbitrages goes on, but you get the hang of it. You can look for the peculiar context in which you are working, and optimise your work for that. Yet another “arbitrage” is that since my team’s work doesn’t get productionised in code, we can use R, rather than the more engineering-friendly (but data analyst-unfriendly) Python.

The dbplyr superpower

In my company we deal with really big data. I’m told we produce a terabyte of data a day (and this is just what we put into our data lake). The amount of data is so large that I usually end up building models on 15 days of data - any more and the amount of time taken to model will be inordinate.

Also, the large amount of data that is present means that I have eschewed my model of loading all the data into my R workspace, and then working on it. Instead I pull data from the database as and when I require it.

Now, normally, the way to pull data from the database would be in terms of writing SQL queries. However, I find that inefficient. I mean - SQL queries are intuitive (when I first learnt to write them, in 1998, I was fascinated by how “natural” the syntax seemed). However, the moment you want to do some reasonably complex analysis (anything exceeding one nested query, or one join), SQL code can become really hard to read. And harder to debug.

And that is where dbplyr, which I had read about a few years back, comes in.

With dbplyr, you can write code as if you are writing a typical R (or tidyverse) statement, and the code automatically gets converted to SQL and sent to the database server. This way, all your code can be written directly in R, which is far easier to understand and debug (I admit I’ve been using it since 2008), and we also don’t spend an inordinate amount of time writing potentially buggy SQL queries.
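For the curious, here is a rough sketch of what this looks like. It assumes the `dplyr`, `dbplyr`, `DBI` and `RSQLite` packages are installed, and it uses an in-memory SQLite database as a stand-in for a real database server. The `shipments` table and its columns are invented for illustration.

```r
library(dplyr)
library(dbplyr)

# An in-memory SQLite database stands in for the real server (an assumption).
con <- DBI::dbConnect(RSQLite::SQLite(), ":memory:")

# Hypothetical table and columns, made up for this example.
DBI::dbWriteTable(con, "shipments", data.frame(
  centre    = c("BLR", "BLR", "DEL", "DEL", "DEL"),
  status    = c("delivered", "in_transit", "delivered", "delivered", "returned"),
  weight_kg = c(1.2, 0.5, 2.0, 0.8, 3.1)
))

# Ordinary dplyr verbs on a remote table; nothing is pulled into R yet.
delivered_by_centre <- tbl(con, "shipments") %>%
  filter(status == "delivered") %>%
  group_by(centre) %>%
  summarise(parcels = n(), avg_weight = mean(weight_kg, na.rm = TRUE)) %>%
  arrange(centre)

# Print the SQL that dbplyr generated from the pipeline above.
show_query(delivered_by_centre)

# Only now does the query run on the database; the small result comes back to R.
result <- collect(delivered_by_centre)
print(result)

DBI::dbDisconnect(con)
```

The filtering and aggregation happen on the database side. Only the summarised rows travel back to the R session, which is what makes this workable when the full table is too large to load.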

Interestingly, this is the first time I’ve actually used dbplyr, and I sometimes feel that I have a sort of “illegal superpower” or “cheat code” because I use this. Exploratory data analysis involving databases is far easier. I spend far less time writing code, and I write cleaner code (SQL inherently looks ugly). I can exploit the computing power of our database server to augment the capacity of the machine I use for my work. And so on and so forth.

What I find fascinating is that I don’t know anyone else (apart from the people in my firm whom I’ve taught) who actually uses dbplyr. Forget dbplyr, I was actually hard-pressed to find people who work heavily with data and use R (most people I know use Python, but then again they’re more on the “data science” side of things).

And this sometimes makes me wonder if there is a catch (there is one - dbplyr code translated to SQL is not always as efficient as hand-written SQL queries. Then again, we have this arbitrage that we don’t do production work, so a little inefficiency doesn’t hurt).

It somehow feels too good to be true.

Visualisation of the week

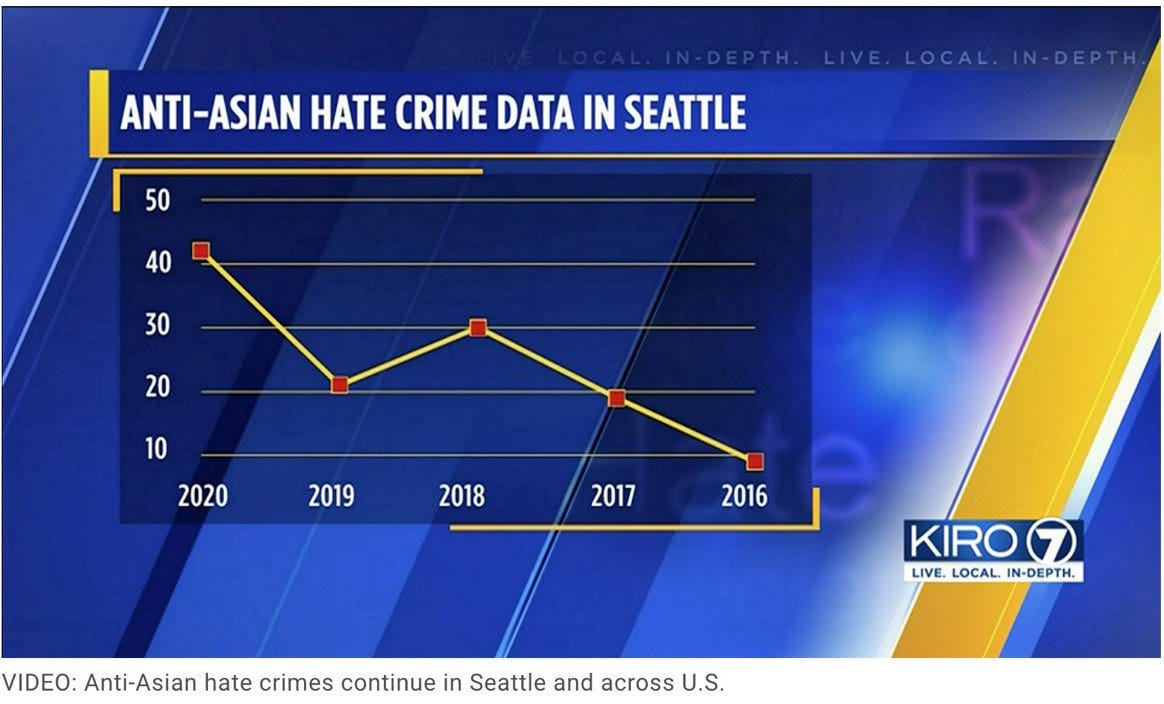

A lot of bad visualisations are results of incompetence, rather than malice. However, this one, which I came across on Twitter, seems like a clear case of malice.

Why would you write the X axis in decreasing order unless you wanted to give a false picture to people who don’t bother looking at the labels?

That’s it for now. This is a new experience for me - writing about my work in a public forum. However, I hope to keep this up. I still don’t promise any frequency for this newsletter - I’ll write as and when I think I have something to say.

Oh, and I must remind you - I’m hiring. We’re doing a lot of exciting work, and there are a lot of interesting problems coming our way. Get in touch if interested, and if you think you are good at R :)

Cheers!